Hi All

One of the founding members of the Cisco UCS Avengers, Fabricio Grimaldi (who also happens to be the cat who first introduced me to Cisco UCS), came up with a great question.

“How does HA (Split Brain avoidance) work with UCS Manager integrated Rack Mounts when there is no Chassis and therefore no SEEPROM?”

Great question, and one I had to admit I did not know the answer to.

Luckily, two other Cisco UCS Avengers founding members, Scott Hanson and Sean McGee, were also CC’d on the question, and it was Sean who came back with the answer.

But before we get into the answer, a quick recap on how this works in a B Series environment.

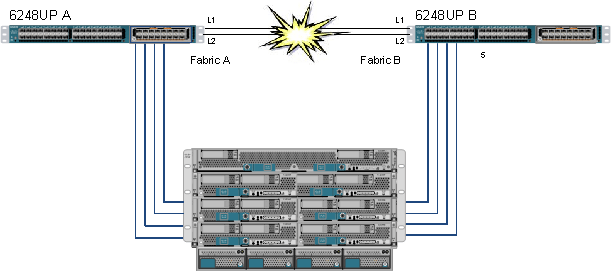

If you are ever in the unlikely scenario that both of your Fabric Interconnect cluster links (L1 and L2) fail, the active UCS Manager remains active, but the standby UCS Manager no longer sees heartbeats from it and so would try to go active as well, resulting in two isolated active brains. This is referred to as “Split Brain” or a “Partition in Space”.

Luckily the smart folks in Cisco anticipated this and added something that prevents this from happening.

There is a Serial EEPROM (SEEPROM) on the mid-plane of each Cisco UCS Chassis that is used as shared storage and updated by both Fabric Interconnects, so each FI can tell that the other is still active by checking the SEEPROM for updates from it.
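To make the shared-storage idea concrete, here is a toy Python model. It is only a sketch: the real SEEPROM layout is not described in this post, so the `SharedStore` class, its per-FI counters and the `peer_alive` helper are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SharedStore:
    """Toy stand-in for one chassis SEEPROM (hypothetical layout).

    Each Fabric Interconnect owns one counter slot and bumps it periodically.
    """
    counters: dict = field(default_factory=lambda: {"A": 0, "B": 0})

    def beat(self, fi: str) -> None:
        # An FI records that it is still alive by advancing its own counter.
        self.counters[fi] += 1


def peer_alive(store: SharedStore, peer: str, last_seen: int) -> bool:
    """The peer counts as alive if its counter moved since we last looked."""
    return store.counters[peer] > last_seen


store = SharedStore()
seen_b = store.counters["B"]   # FI A remembers the last value it saw for B
store.beat("B")                # FI B writes an update to the shared storage
print(peer_alive(store, "B", seen_b))
```

The point of the model is simply that the check works without the L1/L2 links: both FIs only need to be able to reach the same piece of storage.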

In this scenario both FIs will go into standby state and try to claim as many chassis as possible; the FI that claims the most chassis will promote itself to active.

To prevent a tie, i.e. both FIs claiming the same number of chassis, there is again a mechanism in place.

If there is an odd number of chassis in the UCS Domain, no problem, as one FI will always claim more than the other.

If there is an even number of chassis then there is the potential for a tie. So each chassis is designated as claimable or not in the event of a Split Brain; the “claimable” chassis are designated as Quorum Chassis and their SEEPROMs are marked as such.

The UCS Domain always ensures there is an odd number of Quorum chassis. If there is an odd number of chassis, then all chassis SEEPROMs will be marked as Quorum chassis; if there is an even number of chassis, then all but one will be designated as Quorum chassis to ensure an odd number.
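The arithmetic behind the odd-quorum rule can be sketched in a few lines of Python. This is an illustration of the rule as described above, not UCS Manager code: the function names `quorum_count` and `winner` are made up, and the sketch assumes every Quorum chassis ends up claimed by exactly one FI.

```python
def quorum_count(total_chassis: int) -> int:
    """Number of Quorum chassis: all of them if odd, all but one if even."""
    if total_chassis < 1:
        raise ValueError("need at least one chassis")
    return total_chassis if total_chassis % 2 == 1 else total_chassis - 1


def winner(quorum: int, claimed_by_a: int) -> str:
    """FI that claimed the majority (assumes each Quorum chassis is claimed once)."""
    claimed_by_b = quorum - claimed_by_a
    # With an odd quorum the two counts can never be equal.
    return "A" if claimed_by_a > claimed_by_b else "B"


for total in (3, 4):
    q = quorum_count(total)
    results = [winner(q, a) for a in range(q + 1)]
    print(total, "chassis ->", q, "quorum chassis, outcomes:", results)
```

Whatever way the claims fall, an odd quorum always splits into a larger and a smaller share, so one FI always comes out ahead.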

OK, hope that’s all clear.

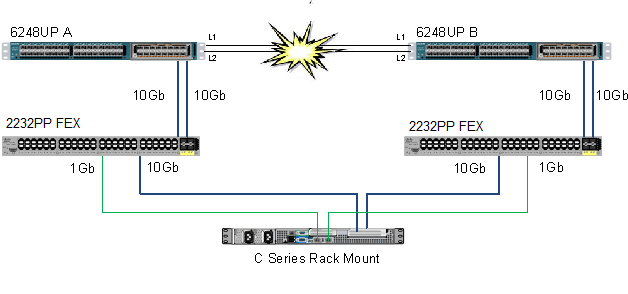

So coming back to the main question in the opening paragraph: how the heck does this work in a UCS Manager integrated C Series Rack Mount server environment, which does not have any SEEPROMs?

Well…… Tune in next week, same UCSguru time, same UCSguru channel. OK, just kidding.

I’m sure we are all familiar with the diagram below, which shows the current method of integrating a UCS C Series Rack Mount server into a UCS Manager Domain. (It gets simpler in UCSM 2.1, as you only need the 10Gb connections from the server.) In essence it is an exploded chassis with external FEXs and Compute Nodes, but one thing is missing from this exploded chassis……. Yes, that’s right, the SEEPROM.

OK, so while a C Series Rack Mount does not have a SEEPROM, it does have a Cisco Integrated Management Controller (CIMC), previously referred to as a Baseboard Management Controller (BMC).

In a mixed environment of Chassis and Rack Mounts, a file in /mnt/jffs2 on the CIMC of the Rack Mount has the same layout as the SEEPROM in a chassis. (There was an update to UCS Manager to recognise these “fake” SEEPROMs.)

The output below shows a UCS system that is using both Chassis and Rack Mounts as shared storage to prevent Split Brain.

UCS-DEMO-A# show cluster extended-state

Cluster Id: 0x70af642e8d7811e1-0x8d99547fee0d3804

Start time: Mon Nov 5 17:08:55 2012

Last election time: Tue Nov 6 10:20:47 2012

A: UP, PRIMARY

B: UP, SUBORDINATE

A: memb state UP, lead state PRIMARY, mgmt services state: UP

B: memb state UP, lead state SUBORDINATE, mgmt services state: UP

heartbeat state PRIMARY_OK

INTERNAL NETWORK INTERFACES:

eth1, UP

eth2, UP

HA READY

Detailed state of the device selected for HA storage:

Chassis 1, serial: FOX1530G861, state: active

Server 1, serial: FCH1525V01T, state: active

Server 8, serial: WZP1615000E, state: active
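If you want to pull the HA storage devices out of that output in a script, something like the following Python sketch would do it. It assumes the device lines always look like the three shown above (“Chassis N, serial: X, state: Y” or “Server N, …”); the function name `ha_storage_devices` and the dictionary keys are my own choices, and the sample text is just the tail of the output above.

```python
import re

# Matches lines such as "Chassis 1, serial: FOX1530G861, state: active".
DEVICE_RE = re.compile(r"^(Chassis|Server) (\d+), serial: ([^,\s]+), state: (\w+)$")


def ha_storage_devices(output: str) -> list:
    """Return the devices listed as HA storage in the pasted CLI output."""
    devices = []
    for line in output.splitlines():
        match = DEVICE_RE.match(line.strip())
        if match:
            kind, number, serial, state = match.groups()
            devices.append(
                {"kind": kind, "id": int(number), "serial": serial, "state": state}
            )
    return devices


SAMPLE = """\
HA READY
Detailed state of the device selected for HA storage:
Chassis 1, serial: FOX1530G861, state: active
Server 1, serial: FCH1525V01T, state: active
Server 8, serial: WZP1615000E, state: active
"""

for device in ha_storage_devices(SAMPLE):
    print(device["kind"], device["id"], device["serial"], device["state"])
```

Note that this system lists three devices, one chassis and two rack mounts, which fits the odd-number rule from the recap.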

I hope you found this post as interesting to read as I did to write, and again, big thanks to Sean, Scott and Fab for their input!

Regards

Colin

Thanks a lot all of you UCS Guru’s. I really enjoy reading through this blog & the insight it provides into this amazing product from Cisco!

Reblogged this on Jonathan Frappier's Blog and commented:

Great overview of HA with Cisco UCS manager

Thanks for spreading the word Jonathan

Nice blog by the way http://jonathanfrappier.wordpress.com/

Regards

Colin

Thanks – love the info you are sharing. I am almost a couple years away from my last UCS deployment but love the platform and trying to keep up with it as much as possible.

Excellent article, simple and to the point!