As you may be aware, a major UCS Manager update has been in development for the past 12 months or so. I have been keeping a keen eye on this, as there are several aspects of the new release that I have wanted for a long time.

As some of my blog readers will know, about 8 months ago I wrote a post entitled “UCS the perfect solution?” where I detailed my top five gripes, or features I would like to see, in Cisco UCS Manager. Well, with the imminent release of UCSM 2.1, they are now all pretty much crossed off.

This release, previously only referred to by the Cisco internal code name “Del Mar”, has been allocated the version number 2.1 and is currently due for general release in Q4 this year.

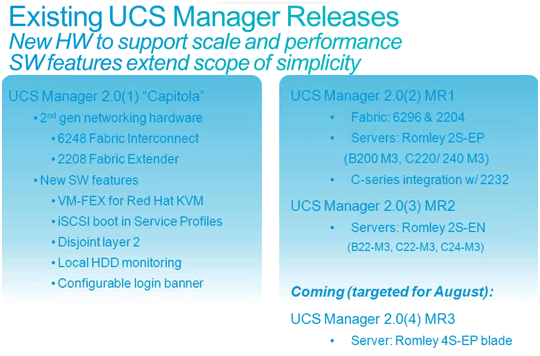

The above shows the maintenance releases for Capitola (UCS Manager 2.0) including the current 2.0(4) release required to support the new B420 M3 4 Socket Intel E5 “Romley” Blade.

I have summarised the main features of Del Mar below and picked out some of the key ones.

1. Multi-Hop FCoE

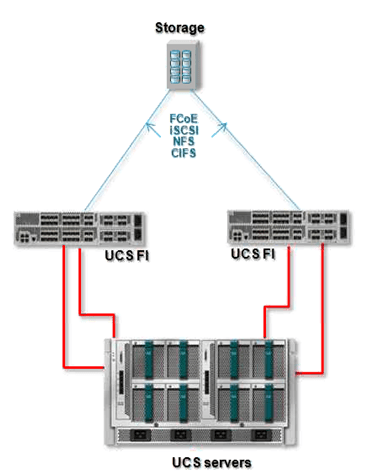

So first off, and one of the most eagerly awaited features, is full end-to-end FCoE. This means we will no longer have to split Ethernet and native Fibre Channel out at the Fabric Interconnect, but have the option of continuing the FCoE connectivity northbound of the FI into a unified FCoE switch like Nexus and beyond, or even plugging FCoE arrays directly into the FI itself, as shown in the diagram.

Main Benefits: further cost reduction in cabling etc. No dedicated native Fibre Channel switches required; full I/O convergence in the DC is now available.

2. Zoning of Fabric Interconnects

Full zoning configuration is now supported on the FI. Previously the FI could only inherit zone information from a Nexus or MDS switch; with UCSM 2.1 the FI will support full Fibre Channel zoning.

Benefits: Fabric Interconnect could now also be used as a fully functional FC switch for smaller deployments negating the requirement for a separate SAN fabric.

3. Unified Appliance Ports

You will now be able to run both block and file data over a single converged cable directly into your FCoE storage array (NetApp will be the only array supported initially), as shown below.

Benefits: Further cost reductions by consolidating ports and cabling, and running both Block and file data over the same cable.

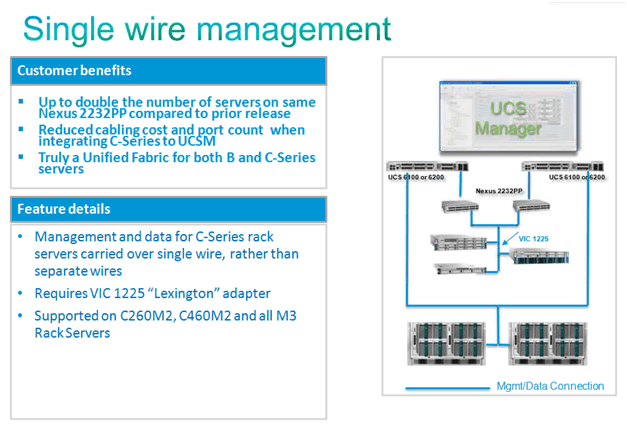

4. Single Wire C series Integration

C-Series integration is now where it should be, i.e. a single 10Gbps connection to each Fabric by way of a 10Gbps external Fabric Extender (Nexus 2232PP). This single 10Gbps connection to each fabric carries both data and management (in the same way as the B-Series blades). Prior to 2.1 you had to cable the C-Series with separate cables for data and management.

In essence you are creating a blown-out chassis, with external FEXs and compute nodes.

I’m a great believer in the right tool for the job, and not all roads lead to a blade form factor, so having tight, seamless rack mount integration is great. And if for whatever reason you want to move a workload from a blade to a UCSM-integrated rack mount, it’s just a few short clicks to accomplish.

(Supported Single Wire platforms C22M3, C24M3, C220M3, C240M3)

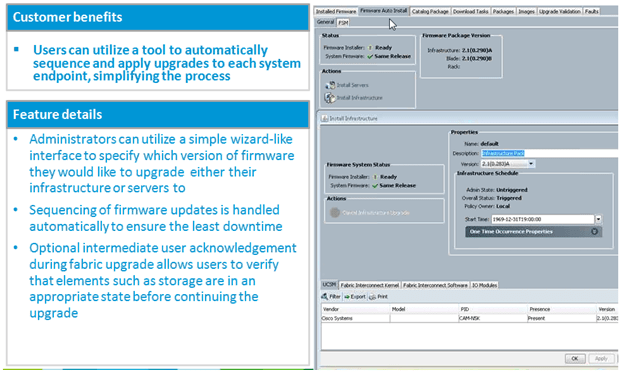

5. Firmware Auto Install

Anyone who has done a UCS infrastructure Firmware upgrade knows it is a bit of a procedure and obviously has to be done in a particular order to prevent unplanned outages. UCSM 2.1 comes with a Firmware Auto Install wizard which automates the upgrade.

The Firmware Auto Install below upgraded my entire UCS infrastructure in 35 minutes, in the correct order, with only a user acknowledgement of the Fabric Interconnect reboot required.

Benefits: Should provide a consistent upgrade process and outcome, reduce margin for human errors, speed up upgrade time.

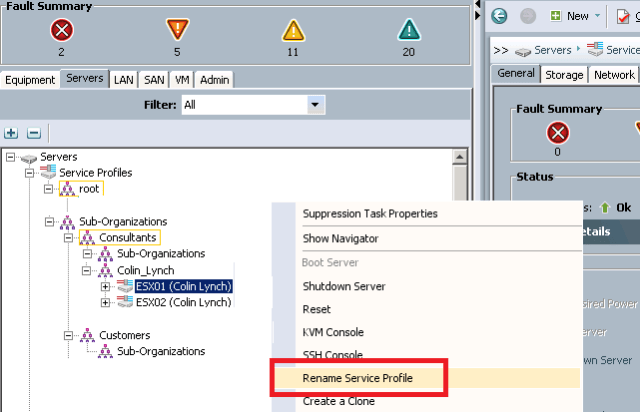

6. Rename Service profiles

Hurray, been waiting for this for a long time.

You will now be able to non-disruptively rename Service Profiles.

This puts the power back into using Service Profile Templates, as I found myself cloning SPs rather than generating batches from Templates, purely because I did not want a generic prefix that I could not change.

Service Profile Templates cannot be renamed, nor will you be able to move Service Profiles between organisations. But hey, that’s no real biggy: they are easy enough to clone into a different Org, then just change the addresses manually (pools will update themselves with these manual address assignments).

7. Fault Suppression

I’m sure you have all at some point rebooted a blade or made planned config changes to an SP, only to see UCSM display a plethora of errors while the change is being applied. Obviously, if this was planned, you don’t want your Call Home or monitoring system to alert on these “Phantom Errors”.

Worry not! You will now be able to put an SP into “Maintenance Mode”, and while in Maintenance Mode UCSM will not report any errors for that SP.

Also, existing error conditions that are “expected” will no longer raise faults, e.g. VIF flaps during service profile association/de-association.

8. Support for UCS Central

UCS Central, previously known under the Cisco internal code name “Pasadena”, is due out later this year. UCS Central will allow full management and pooling of addressing between separate UCS domains. UCS Central will be released in two functional phases.

Phase 1: Able to pool and share resources between multiple UCS domains.

Phase 2: Able to move Service Profiles between multiple UCS domains.

See my full post on UCS Central Here.

9. VM-FEX Supported in Hyper-V

VM-FEX will be supported in Microsoft Hyper-V, as will Single Root I/O Virtualisation (SR-IOV), where the hypervisor will support dynamic creation of PCI devices on the fly. (Currently this is done via UCSM.)

10. VLAN Groups

You will now be able to group VLANs and associate these groups with certain uplinks (this will be a nice feature when using disjoint layer 2).

11. Org Aware VLANs

Another nice feature is that Organisations can now be given permissions to particular VLANs, so in essence Service Profiles can be limited to only being able to use VLANs assigned to the Organisation they are in. In fact when creating a Service Profile the admin only has visibility of the VLANs granted to the Org they are creating the Service Profile in.

Great for multi-tenancy environments as well as reducing the possibility of misconfigurations and enforcing security policy.
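To make the Org Aware VLAN idea a little more concrete, here is a minimal Python sketch of the filtering logic described above. It is purely illustrative: the `Org` class, the function names and the VLAN data are all my own assumptions, not the UCSM object model or API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Org:
    """Hypothetical model of a UCS organisation and the VLAN IDs granted to it."""
    name: str
    permitted_vlans: set[int] = field(default_factory=set)


def visible_vlans(org: Org, all_vlans: dict[int, str]) -> dict[int, str]:
    """Return only the VLANs an admin working in this org would be offered."""
    return {vid: name for vid, name in all_vlans.items() if vid in org.permitted_vlans}


def disallowed_vlans(org: Org, requested: list[int]) -> list[int]:
    """Return the requested VLAN IDs that the org has not been granted."""
    return sorted(set(requested) - org.permitted_vlans)


# Example data, made up for illustration.
ALL_VLANS = {10: "prod-web", 20: "prod-db", 30: "tenant-b"}
tenant_a = Org("Tenant-A", {10, 20})

print(visible_vlans(tenant_a, ALL_VLANS))
print(disallowed_vlans(tenant_a, [10, 30]))
```

The point of the sketch is simply that the permission check happens per Org, so a Service Profile in Tenant-A never even sees VLAN 30.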

Anyway that’s my summary, lots of good stuff coming.

Regards

Colin

Hi, very nice post!!

I have a question. If we have 2 sites, one with Zoning implemented in the Nexus 6284A and B and the second one with 2 MDS switches, could we make an ISL and merge zones among the nexus and the MDS?

We are planning a new install for next month and in this case we would need to buy just 2 MDS instead of 4 of them. It would help to sell the project.

Hi Raul

I certainly don’t see why not, after all even without the 2.1 Code you can connect an FI in FC Switch mode to a Nexus or MDS via an ISL (E_Port) and “inherit” the zone information.

Regards

Colin

You can definitely create an ISL between a Fabric Interconnect in FC switch mode and an FC switch. The only limitation to be aware of when using the new UCSM 2.1 FC Zoning feature is that currently we don’t support being connected to a storage switch at the same time. It’s one or the other. The FC zoning feature was added to augment environments where there was no storage switch (such as dev or lab environments).

Regards,

Robert

Hi Robert

Thanks for the clarification and caveat.

Regards

Colin

Hi Colin,

thanks for the answer!! Just a last question 🙂

If connectivity between site I and site II goes down, the inherited zoning still works, doesn’t it?

regards,

Raul

Colin, very nice post here on UCSM 2.1

As I was looking at the related links you have listed for UCS, I wanted to bring your attention to a fairly recent piece of collateral we’ve produced. It’s a nice .pdf summary of specifications for all our UCS products. A more comprehensive and clean-to-print version of our model compare pages, if you will:

Click to access at_a_glance_c45-673174.pdf

It is a bit buried by our content management system on the website. This page contains this document and similar references:

http://www.cisco.com/en/US/products/ps10280/product_at_a_glance_list.html

I thought your readers might find this portfolio summary useful.

Thanks,

Todd Brannon

UCS Product Marketing at Cisco

Thanks Todd

Scott Hanson had also mailed me a PDF copy of the posters (Thanks Scott) and I agree it’s a great one stop port of call for comparing Cisco UCS Specs.

I’m also a big fan of the Cisco UCS Tech Specs app by Vallard Benincosa, but the PDFs are great for a quick comparison.

Thanks again for highlighting it here and posting the link.

I’ll also add the links to the “links” category on the front page.

Regards

Colin

Is there updated API documentation for 2.1, and for UCS Central?

Hi, not that has been released yet, but I am sure we’ll see one soon. Keep an eye on:

http://developer.cisco.com/web/unifiedcomputing

That’s where it will appear first.

Regards

Colin

Hello guys!

I have vPC from both FIs to a pair of N5Ks (5548UP) and of course separate native FC link between each pair of FI and N5K.

With Multihop FCoE feature introduced in 2.1 version – is there any way to get rid of this native FC link and have FCoE northbound (FI to N5K) USING ONE LINK OF THE EXISTING vPC?

Obviously, another way is to add separate ethernet links (between FI and N5K) and make them unified northbound – in which I don’t see any benefits (except to upgrade from 8Gbps FC to 10Gbps FCoE).

Thank you for any comment.

Regards,

Pavel

Hi Pavel

Great question.

And as far as I am aware running FCoE VLANs over a vPC is still not supported due to the fact that the vPC will connect into both Fabric A and B.

You can of course use Ethernet (single links or port-channels) to carry the FCoE VLANs but these should be only connected to the appropriate Fabric. (Just like you would do in a native Fibre channel topology)
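The rule above (an FCoE VLAN must only ride on uplinks that stay within its own fabric, so never on a vPC that lands on both A and B) can be sketched as a simple check. This is only a sketch under my own assumptions: the `Uplink` class, the names and the VLAN numbers are hypothetical and have nothing to do with the UCSM or NX-OS configuration model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Uplink:
    """Hypothetical description of one FI uplink (single link or port-channel)."""
    name: str
    fi_fabric: str                     # fabric of the FI this uplink leaves from
    upstream_fabrics: frozenset[str]   # fabric(s) of the switch(es) it lands on
    vlans: frozenset[int]              # VLANs allowed on the uplink


def fcoe_violations(uplinks: list[Uplink], fcoe_vlans: dict[int, str]) -> list[str]:
    """List uplinks carrying an FCoE VLAN outside that VLAN's own fabric."""
    problems = []
    for up in uplinks:
        for vlan in sorted(up.vlans & set(fcoe_vlans)):
            fabric = fcoe_vlans[vlan]
            same_fabric_only = up.upstream_fabrics == frozenset({fabric})
            if up.fi_fabric != fabric or not same_fabric_only:
                problems.append(f"{up.name}: FCoE VLAN {vlan} belongs to fabric {fabric} only")
    return problems


# Made-up example: VLAN 100 is the fabric A FCoE VLAN, VLAN 200 the fabric B one.
FCOE_VLANS = {100: "A", 200: "B"}
vpc = Uplink("vPC-FI-A", "A", frozenset({"A", "B"}), frozenset({10, 20, 100}))
dedicated = Uplink("FCoE-PC-FI-A", "A", frozenset({"A"}), frozenset({100}))

print(fcoe_violations([vpc, dedicated], FCOE_VLANS))
```

The vPC is flagged because it terminates on both upstream switches, while the dedicated port-channel to the fabric A switch passes.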

Regards

Colin

Hey guys,

If I still have to run separate FCoE links and Ethernet links from the 6248s to the 5548s as shown, what does this buy me that running the same Ethernet link and a native FC link doesn’t?

Also, if I use the configuration above, can I then pull the FC off the 5548s? We don’t have the FCoE cards for the VNX.

Hi Woody

Certainly the BIG win with FCoE is at the edge: not having to buy HBAs, hugely decreasing SAN edge port count, and reducing the cabling to the hosts is where most of the benefit is felt.

There are still benefits in carrying on the FCoE northbound of the FI, i.e. higher speed, standardising SFPs, not having to dedicate a block of ports on the FI for native FC, harnessing Ethernet standards like LACP, etc. But none of these reasons on their own are really compelling to me.

In my view the end goal will be full FCoE convergence running over the primary Ethernet links (even if using vPC). I think at present that may be a harder pill to swallow for the “Die Hard” storage people who insist on having two physically separate fabrics and who are still not convinced Ethernet is a viable and proven transport for FC.

But I remember having the same conversations with voice people 15 years ago, hearing things like “You can never run our voice over an IP network with the same level of service as using our separate voice network.” And guess what, now VoIP is the norm and younger voice people can’t believe you used to have to run it on a separate network, and so will be the case with FCoE, I’m sure.

I think we are pretty much there now; my big debates with SAN people, addressing their concerns with FCoE, have really tailed off, as most of them just “get it” these days.

So we are at the stage where running 2 separate logical SAN fabrics over a resilient Ethernet network is accepted, but I’m sure it won’t be too long before just running a single large, fully redundant SAN over FCoE from the array to the host over a fully redundant Ethernet network is the “norm”, even if using vPC.

Obviously if you do not use vPC you can have all of this now, by just using separate links or separate port-channels from each FI to the upstream SAN switches, as then you can map the FCoE VLAN to only those port-channels / links that go to the correct fabric.

I have shown both setups below (The Setup with vPC is one of my current Lab setups)

Multihop FCoE with vPC

Data VLANs over Ethernet vPC, FCoE VLANs over FCoE Port-Channel

Multihop FCoE without vPC

Data VLANs over both Port-Channels, FCoE VLANs over port-channel to local Fabric

Hope that clarifies things for you

Regards

Colin

NB) If you’re not already, I suggest you follow @drjmetz, as he is “the man” for FCoE.

Regards

Colin

Hi Colin,

I was with you up until the last diagram. If I read it correctly (which, of course, there’s no guarantee that I do 😉), you have cross-connected the SAN A/B topologies between the FIs and N5ks. In reality you wouldn’t have FIa connecting to N5kb with FCoE like that. This is true even with shared links.

As of this writing, the first topology you show is supported, but the second is not (again, assuming that I understand your diagram correctly).

Hope that helps,

J

Thanks J

I’ve made the diagram a bit clearer and labelled the cross connection port-channels (these do not carry the FCoE VLANs)

The only FI Port-channels that carry the FCoE VLANs are the ones between FI A and N5KA and FI B and N5KB.

I have also amended the colour code of the cross-connect port-channels; as they do not carry the FCoE VLANs, they are just carrying Ethernet data VLANs.

This example shows how you can carry FC storage and Ethernet data VLANs without having to dedicate links purely for the storage traffic, as in the vPC example.

That said, I much prefer and recommend separating out the storage links and using the top vPC topology.

Great to get input from “The Man” on FCoE, thanks again.

Regards

Colin

Guys thanks for the diagrams and information

Hi Colin,

Great post. I was really looking forward to the main points on UCSM 2.1.

Any word yet on when the 6200 FIs will be layer 3 ready?

Regards,

Arthur

Hi Arthur

Thanks, no set date for L3 as far as I have heard as yet.

Regards

Colin

I’m not really sure of the use case for L3 on the FI. The FI is meant to act as an End Host. The same reasons we haven’t seen much of a need for routing at the hypervisor layer applies to UCS. Since the Nexus 5500 supports the L3 daughter card it will likely trickle down to UCS, but as Colin mentioned, there are no tentative plans as of yet.

Thanks Robert

Agreed! Having L3 on the FIs certainly hasn’t been high, or even on, my or any of my customers’ want lists either.

Hi Colin,

great diagrams about FCoE in vPC environment … makes understanding very clear.

Now I have another problem, running software version 2.1.1a. Is there any way to create KVM user role only and WITHOUT read-only access to management ? Role service-profile-ext-access works for kvm, but user has also RO access to all management, which is undesirable.

I heard that this >should< work in 2.1, but didn't find any solution.

Thanks

Regards,

Pavel

Colin – Very nice post. I want to ensure I understand your second diagram properly. With the FCoE Port-Channel and the Ethernet Port Channel, are you setting PIN Groups to direct traffic or are you allowing the FIs to handle the direction of traffic?

Also – What would you recommend for QoS COS settings for this type of topology?

Hi Richard

No Pin groups required, I would just add the relevant FCoE VLAN to the relevant port-channel via LAN uplinks manager.

But again, I would prefer using separate links, whether FCoE or native FC, for block storage protocols.

As for the CoS for the FCoE traffic, the DCB standard already takes care of that, by adding an Ethertype of 8906h to any FC traffic exiting the Cisco CNA / VIC, and placing it on “lane” 3 of the 8 CoS lanes defined in the 802.1Qbb standard (a sub-standard within DCB).

Lane 3 is defined by default as a “non drop” class which uses a “pause mechanism” to replace the non drop “buffer credit” method used in the ANSI standard. Lane 3 also has a default weight of 50% (5Gb) of the link bandwidth. (It can use all the bandwidth if there is available capacity, though.)
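The 50% (5Gb) figure above is just weighted sharing of a 10Gb link. As a rough sketch of the arithmetic, assuming a hypothetical pair of classes with equal weights (the class names and weights below are my own illustration; only the 50% share for the FCoE lane comes from the explanation above):

```python
from __future__ import annotations


def guaranteed_gbps(weights: dict[str, int], link_gbps: float) -> dict[str, float]:
    """Split link bandwidth between classes in proportion to their weights.

    This is the guaranteed minimum under congestion; a class may use more
    when the other classes are idle.
    """
    total = sum(weights.values())
    return {name: link_gbps * weight / total for name, weight in weights.items()}


# Assumed example: an FC class and a best-effort class with equal weights on a 10Gb link.
weights = {"fc": 5, "best-effort": 5}
shares = guaranteed_gbps(weights, 10.0)
print(shares["fc"])  # 5.0, i.e. 50% of the link
```

Adding further weighted classes to the dictionary shows how the FCoE share shrinks as a percentage unless its weight is raised to compensate.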

The below diagram should make things a bit clearer.

Regards

Colin

Using VLAN groups, Firmware auto-update, and it is nice to rename service profiles. I tend to just increment the service profiles and use labels to differentiate. One bug in the code I noticed is if you rename a service profile before associating with a blade, it will show an error forever. GREAT POST.

Hi, Colin – If I am looking at this right, I can use PIN groups to control VLANs to go over a certain northbound connection, right?

I have 2 vPC (4 ports) groups going to our main network and 2 ports going to a different switch (DMZ). I want to make sure that traffic does not cross.

Thanks,

Chris.

Hi Chris

That’s not the idea of Pin Groups. A Pin Group is simply to influence exactly which uplink port a specific vNIC (not VLAN) gets pinned (mapped) to.

The only real case to use Pin Groups is to perhaps dedicate a single link or port channel to a specific high-bandwidth workload (Oracle perhaps).

The process you want is much easier, and is referred to by Cisco as “Connecting to Dis-joint layer 2 Domains”, meaning separate networks with non-overlapping VLAN IDs.

All you need to do is go into “LAN Uplinks Manager” and select the VLANs you want on each uplink (by default all VLANs exist on all uplinks, which assumes all upstream switches have L2 adjacency, which is not true in your case).

The below chapter covers your requirement.

http://www.cisco.com/en/US/docs/unified_computing/ucs/sw/gui/config/guide/2.0/b_UCSM_GUI_Configuration_Guide_2_0_chapter_010101.html
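As a quick sanity check of a disjoint layer 2 design, the “non-overlapping VLAN IDs” requirement can be sketched in a few lines of Python. The uplink names, domain labels and VLAN IDs are made up for illustration, and this is not how LAN Uplinks Manager itself works internally; it is only a way of checking a plan on paper.

```python
from __future__ import annotations

from collections import defaultdict


def overlapping_vlans(uplinks: dict[str, tuple[str, set[int]]]) -> dict[int, list[str]]:
    """Return VLAN IDs assigned to uplinks in more than one L2 domain.

    `uplinks` maps an uplink name to (L2 domain label, VLAN IDs on that uplink).
    """
    domains_by_vlan: dict[int, set[str]] = defaultdict(set)
    for domain, vlans in uplinks.values():
        for vlan in vlans:
            domains_by_vlan[vlan].add(domain)
    return {
        vlan: sorted(domains)
        for vlan, domains in sorted(domains_by_vlan.items())
        if len(domains) > 1
    }


# Hypothetical plan: two vPCs to the main network and two ports to a DMZ switch.
plan = {
    "vPC-1": ("main", {10, 20, 30}),
    "vPC-2": ("main", {10, 20, 30}),
    "Eth1/15": ("dmz", {30, 40}),
    "Eth1/16": ("dmz", {40}),
}

print(overlapping_vlans(plan))  # VLAN 30 appears in both domains and needs fixing
```

An empty result means every VLAN lives in exactly one upstream domain, which is what you want before assigning VLANs to uplinks.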

Regards

Colin

Hello Colin,

In your first picture discussing multi-hop FCoE you show an FCoE array directly attached to the fabric interconnects. Does this require the 6248 and unified appliance ports?

Thanks, John.

Hi John

For direct attach FCoE storage the ports can be configured as FCoE Storage Ports (in FC switch mode), valid on all FIs (6100/6200) with at least 1.4 code. As you mention, you could also configure the ports as Unified Storage Ports (the combination of an FCoE Storage Port and an Appliance Port).

The below link gives a good overview with regards to direct attach storage using NetApp arrays.

http://www.cisco.com/en/US/prod/collateral/ps10265/ps10276/whitepaper_c11-702584.html

Regards

Colin

Thanks Colin. That article implies that we still need an external FC switch for creating and managing zones as this can’t be done on the fabric interconnects. Is that still the case?

Thanks, John.

As of the latest version 2.1 – you can create local zones on the Fabric Interconnects while running in FC Switch Mode. We require that you do not have any upstream SAN switches connected for this topology to be supported. (One or the other, local zones, or upstream SAN switch to push them).

http://www.cisco.com/en/US/docs/unified_computing/ucs/sw/gui/config/guide/2.1/b_UCSM_GUI_Configuration_Guide_2_1_chapter_011011.html
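The “one or the other” rule above boils down to a small truth table. A minimal sketch, with a function name and parameters that are my own invention rather than anything in UCSM:

```python
def zoning_topology_supported(fc_switch_mode: bool,
                              local_zoning: bool,
                              upstream_san_switch: bool) -> bool:
    """Check a planned design against the rules described in this thread.

    - Local zones on the FI require FC switch mode.
    - Local zones and an upstream SAN switch must not be combined.
    """
    if local_zoning and not fc_switch_mode:
        return False
    if local_zoning and upstream_san_switch:
        return False
    return True


# Direct-attach lab setup: FC switch mode, local zones, no upstream switch.
print(zoning_topology_supported(True, True, False))
# Local zones plus an upstream MDS: not a supported combination.
print(zoning_topology_supported(True, True, True))
```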

Regards,

Robert

Thanks for picking this one up Robert.

Certainly another nice update for Direct Attach Environments

Colin

Hello there Colin, great post. I was interested in the bit of the Auto Install. We are currently running version 2.0(2q) and I was wondering if you knew of any “gotchas” that I would have to be worried about when doing the update to 2.1(1e)?

Hi Kris

So far in the 20 or so upgrades I have done using FW Auto Install, all have gone well and I have had no issues.

(I did get the odd UCSM hang when upgrading manually to 2.0)

If you do your UCSM version first, you can then upgrade the rest of the UCS Infrastructure using Auto Install.

Then just upgrade your blades via Host FW Policies as and when you can free them up for a reboot.

If your Service Profiles are bound to an updating template, I would not associate the Host FW Policy with the updating template directly. Doing so would attempt to upgrade and reboot all of the Service Profiles bound to that template, or put them all in a “Pending Reboot” state if you have wisely changed the default Maintenance Policy to “User Ack”.

Instead, I would put the host into Maintenance Mode and shut down the blade (if using VMware, or just shut the server down if running another OS), unbind the Service Profile from the template, associate the Host FW Package with the now unbound SP, then bind it back again (assuming you have no Host FW Policy associated with the updating template; if you do, just remove it).

Once all of the blades have been upgraded you can then, if you wish, associate the 2.1 Host FW Policy with the updating template to ensure all future SPs created from it are auto-upgraded.

Good luck, you’ll be fine!

Colin

Thanks for the information Colin. I did end up finding a great tutorial on Cisco’s site that includes some videos, although the videos leave out some things that you see when actually performing the task. ( http://www.cisco.com/en/US/products/ps10281/prod_installation_guides_list.html ) I do have one more question though. We are running ESXi 5.0.0 (914586) and I heard mention from another VMware guru that we also had to perform the VMware driver install for the fNIC and eNIC through vCLI for the host servers. I wanted to know if this is something that we could take care of through Update Manager instead of trying to do it manually on each server host. I understand that this might be more of a VMware question, however the driver package is from Cisco for the UCS environment so I thought I would give it a shot. Also, if it is something up your alley, do you know of any good PowerShell scripts that this install could be scripted into? The document from Cisco that references this is titled “b_Cisco_VIC_Drivers_for_ESX_Installation_Guide”. I thank you kindly in advance for your assistance.

Kris.

Dear Guru,

If I upgrade UCS Ecosystem (FI, Blade Server & etc) to 2.1(1e) the latest firmware version do I need to upgrade the MDS 9148 firmware (5.0 (4c)), Nexus 7010 Firmware and Nexus 1010 Hardware appliance firmware?

Thank you.

Hi Jia

In short, no. It is always worth checking the release notes for the UCSM version you are thinking of upgrading to, to check the open and resolved caveats, as well as the Cisco UCS Interoperability Matrix for your UCSM version.

http://www.cisco.com/en/US/products/ps10477/prod_technical_reference_list.html

All that said, if your environment is under a support contract you may need to adhere to your provider’s support matrix; I know EMC, for example, have their own support matrix.

Regards

Colin

Hello Colin,

I am mapping out an upgrade from 2.0 to 2.1 and have a direct connected array using the ‘default zoning’ option in 2.0 (no longer supported in 2.1). It sounds like default zoning will disappear at some point in the upgrade process – which is obviously an issue. I’m wondering if anyone has performed the upgrade and can provide some guidance on how to go about it non-disruptively.

My assumption would be to perform the upgrade steps in the usual order, but to pause and create the proper zoning on the secondary FI after the upgrade/reboot, confirm data paths on the FI, then carry on. I’d love to have someone confirm that this works. Otherwise, I will need to coordinate a massive outage and have a nice long conversation with my Director about how I convinced him to buy UCS because of its enterprise class redundant configuration and explain why I now have to take all the blades offline to upgrade. 😉

Thank you in advance for your insight!

Brent

Hi Brent

I have never done this personally but will see if there is a guide on it; there may be mention in the 2.0 to 2.1 upgrade guide. (I am updating while on holiday from very limited mobile devices at present 😦 )

I would be very surprised if Cisco or TAC do not have a detailed procedure for this, so please wait until I can check or open a case with them. Sounds like a manual upgrade would be better than a Firmware Auto upgrade just so you can be clinically sure of every step.

Your theory sounds good: update a single fabric, zone, confirm paths, then upgrade the other fabric. You will only have this zoning feature once UCS Manager has been upgraded to 2.1. So your upgrade would go something like:

1 UCSM

2 IOM A (Startup Only)

3 FI on Fabric A

4 IOM B (Startup Only)

5 FI on Fabric B

But just to be sure, please check with TAC before proceeding.
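To illustrate the idea of “one fabric at a time, confirm paths, then carry on”, here is a small Python sketch of the ordering above. It only models the sequence and the stop-if-paths-are-down check; `apply_step` and `paths_healthy` are hypothetical callbacks that you would have to supply yourself, and nothing here talks to UCSM.

```python
from __future__ import annotations

from typing import Callable

# The order listed above.
UPGRADE_ORDER = [
    "UCSM",
    "IOM A (Startup Only)",
    "FI on Fabric A",
    "IOM B (Startup Only)",
    "FI on Fabric B",
]


def run_upgrade(apply_step: Callable[[str], None],
                paths_healthy: Callable[[], bool]) -> list[str]:
    """Apply each step in order, halting after an FI step if storage paths are not healthy.

    Returns the list of steps that were actually applied.
    """
    done: list[str] = []
    for step in UPGRADE_ORDER:
        apply_step(step)
        done.append(step)
        # Only move on to the other fabric once the upgraded one is passing traffic.
        if step.startswith("FI") and not paths_healthy():
            break
    return done


# Dry run: record the steps and pretend the path check fails after Fabric A.
log: list[str] = []
completed = run_upgrade(log.append, lambda: False)
print(completed)
```

In the dry run the sequence stops after “FI on Fabric A”, leaving Fabric B untouched, which is exactly the behaviour you would want if zoning or paths did not come back as expected.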

In the meantime if I confirm the procedure I’ll update this thread.

Regards

Colin

Thank you for the comments Colin. TAC was able to test this upgrade in a lab and determined that default zoning is disabled when the UCS Manager software is updated (well before the FW is upgraded on the FI’s). As such, storage connectivity would be immediately interrupted on both fabrics which would cause an extremely bad situation for running virtual machines.

One potential work-around that was suggested was to put in a temporary MDS switch and use that for the zoning DB temporarily. For our situation, we are planning to take a short outage as the temporary switch option was not completely embraced by TAC. I think the response was: “yeah, that might work”…which wasn’t quite enough for me to hang my hat on! 😉

Again, thank you for the insight. After some careful consideration, we have decided that a short planned outage is a better option than introducing this level of risk.

-Brent

Thanks for that Brent

Some useful info for anyone looking to do the same.

Regards

Colin

Hi Colin,

I’m using multihop FCoE with UCS Manager version 2.1(b), but still have a warning on each Fabric Interconnect, A and B: warning code F1083, description “FCoE uplink is down on Vsan 1”. Is it a bug? I have no idea how to clear it.

Thanks.

Hi Mike

I am aware of a bug in 2.1 (CSCud81857) Where you get that error if the VSAN is not configured upstream (Even if you are not using it i.e. error reporting on VSAN 1 if you are using a different VSAN ID ). I have just done a quick check and the current state of that bug is (No Work around, error is cosmetic).

I would suggest you log a TAC case, to confirm that is indeed the bug you are running into and whether there is a fix as yet. Please report back how you get on.

Regards

Colin

I am getting a lot of info from this discussion, but I am still wondering why I should buy an MDS switch for zoning if I get the same thing in the Fabric Interconnect. Could you please help me understand what the challenges would be, in a real production environment, of running the zoning completely in the Fabric Interconnect?

Hi Bikash

It’s quite a simple decision really: if you have a relatively small FC requirement and the only servers that will be using the SAN are the UCS servers behind the Fabric Interconnects, then it doesn’t really seem cost-effective having to buy 2 x separate SAN switches just to provide the SAN connectivity.

It makes sense with a small DC in a rack-integrated system like a FlexPod / Vblock if you are trying to keep costs to a minimum, and we may well see direct attach options on an entry-level FlexPod or Vblock in the near future.

But for any enterprise-class implementation I would always look to provide separate SAN switches or use a unified Nexus 5500 to provide the FC/FCoE functionality.

Regards

Colin

Bit annoyed that they disabled default zoning on SANs; worse yet, they left it in their CLI manual for the 2.1 release.

Typically you don’t disable a feature…

Hi Jason, I think it is true and annoying. Now you can’t create a VSAN in order to connect external initiators (a rack server) to connect to the storage.

I would like to know if I’m right.

Also, we expected other ways to configure zoning. It would be nice to use Fabric Manager or another tool to do the zoning.

Anyone else notice a bug with the FLOGI database? Could not get any zoning to show up unless you mounted a virtual CD-ROM or installed an image.

The fabric manager can do full local zoning, but you need to set the interconnects to switch mode instead of end host mode.
