What Clothes is your Network wearing?

Thought of the Day

Every so often a conversation crops up in our house that immediately sparks thoughts of a network analogy in my mind, or reminds me how everyday occurrences keep you grounded and your ego in check. Thoughts like ‘What a leveller pets are: yesterday I was presenting hyper-convergence to a large global bank and today I’m cleaning cat sh!t off a carpet’.

Well, today I had another such thought. Like most people I’m a busy person; my general routine is up at 05:45, an hour in the gym, come back, walk the dog, shower, change for work, and be in my home office just before 09:00. Well, that’s the ideal. The reality is often a little different, with ingredients like getting dressed in the dark, running a bit late at the gym, etc. Now, as you can imagine, depending on where I am in the above cycle, my appearance can at best be described as a little odd. But this morning my 16-year-old daughter and I crossed paths in the hallway as I was about to walk the dog, and I was greeted with the words ‘Oh my god dad, what do you look like, you’re not going out like that are you!’ At which point I did have to check myself in the mirror, to realise that I was in shorts, green polyester socks (probably a Christmas present from years ago, worn just because they are really quick to slide on), a T-shirt with the slogan ‘I run because I really like Beer’, one slider and one welly (which I was halfway through changing into). Apologies for that mental picture to anyone still eating breakfast. The fact is I looked like I had been dragged through a charity shop backwards.

But then I got to thinking: I probably look like many of the Enterprise Networks I see. Very rarely does any client have the luxury of buying all their infrastructure at the same time and ensuring their security, tooling, observability and NOC / SOC are all holistically designed to work seamlessly in harmony with each other. That may have been the case once, if you were lucky enough to have started greenfield, but different hardware and software refresh cycles, domain silos, OEM politics, even whichever vendor the CEO was playing golf with that week all affect decisions. And before too long your network is looking like that mental picture now sadly burned into your brain.

The problem isn’t aesthetics. The problem is that complexity quietly taxes every decision, slowing change, increasing risk, and turning strategic choice into compromise.

Well, what’s the answer? Or at least ‘an answer’? There are obviously assessment services you can undertake and holistic reviews to see where the gaps and overlaps are. But again the reality is, it’s best to first define what you want to look like and have a clear picture of what you want your final look to be. That then becomes the ‘North Star’ you are working towards. It may be a 3 year or 5 year plan, and you may never actually reach it, as it’s a rolling window and that North Star will evolve as technology evolves. Every decision you make from then on is guided by that North Star. There is no need for ‘rip and replace’; you just need a ‘mirror’ and the discipline to stop buying clothes that don’t fit the outfit you are trying to put together.

This isn’t an architecture exercise, it’s a leadership one, clarity of intent matters more than technical perfection.

So perhaps it’s worth taking a step back, having a good look, and asking yourself: what clothes is your network wearing?

Have a good weekend!

Where’s the real cost consideration in your AI back‑end Network?

AI Thought of the Day

Let’s talk about a myth I still hear far too often:

“The network cost is generally about 10–15% of an AI build, and the difference in cost between an InfiniBand and Ethernet backend fabric isn’t compelling enough for me to ‘risk’ going Ethernet.”

Here’s the reality, and let’s keep the maths simple.

If you’re buying a £100M AI platform, the network might ‘only’ be £10M of that. And the cost delta between an InfiniBand back‑end and an Ethernet back‑end might only be a few percent of that £10M.

So will a serious AI customer really ‘risk’ the performance of their entire platform just to save a few quid on the scale-out / backend fabric?

I’ve never heard a large-scale AI buyer say:

“Let’s risk GPU idle time on a £500M supercomputer to save £5M on the network.”

Because the real financial equation isn’t about the network CAPEX at all.

The real cost driver is this:

Even a low single-digit percentage drop in GPU utilisation costs more (often far more) than the entire network saving.

If your GPUs are waiting around because your network is congested, mis‑tuned, or simply not engineered for scale‑out AI behaviour, that’s not a technical issue.

That’s a material financial loss, examples being:

- Lost revenue (GPUaaS)

- Delayed training results (slow product cycles)

- Energy + cooling (real OPEX burn)

- Total cost per token

- Stranded capital lowering ROI

All of these dwarf any saving made by choosing a ‘cheaper network’.
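To put rough numbers on that, here is a minimal Python sketch of the break-even arithmetic. The £500M platform and the £5M network saving come from the quote above; the 70% GPU share of spend is purely an illustrative assumption of mine, and the function names are hypothetical. It only counts stranded capital, so lost revenue, energy and delayed results would push the real figure higher.

```python
def stranded_capital(gpu_capex: float, utilisation_drop: float) -> float:
    """Capital effectively wasted when GPUs sit idle for a fraction of their life."""
    return gpu_capex * utilisation_drop


def breakeven_drop(network_saving: float, gpu_capex: float) -> float:
    """Utilisation drop at which idle GPUs cancel out the network saving."""
    return network_saving / gpu_capex


PLATFORM_COST = 500e6   # £500M build, from the quote above
NETWORK_SAVING = 5e6    # £5M saved on the fabric, from the quote above
GPU_SHARE = 0.70        # ASSUMPTION: share of total spend that is GPU capex

gpu_capex = PLATFORM_COST * GPU_SHARE
print(f"Break-even utilisation drop: {breakeven_drop(NETWORK_SAVING, gpu_capex):.2%}")
for drop in (0.01, 0.02, 0.05):
    wasted = stranded_capital(gpu_capex, drop)
    print(f"{drop:.0%} idle -> £{wasted / 1e6:.1f}M of GPU capital stranded")
```

Under these assumptions the saving is wiped out at well under a 2% utilisation drop; change `GPU_SHARE` to match your own build and the break-even point moves accordingly.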

It doesn’t matter how many of the world’s fastest GPUs you buy,

if the network can’t get data to them fast enough, you may as well switch them off.

Don’t get me wrong, I’m a techy and I like nothing more than debating the intricacies of PFC and SHARP, ECMP vs Packet Spraying, etc.

But the fact is, at board level the network selection has quietly shifted from a “technical architecture choice” to a core business and AI ROI decision.

And as AI environments grow into the tens of thousands of GPUs, the stakes only get bigger.

For now at least, InfiniBand still generally delivers the lowest GPU idle time, although Ethernet with RoCEv2 can get close and NVIDIA’s Spectrum-X even closer. But as my old dad used to say, ‘close only counts in horseshoes and hand grenades’, so if your main consideration is GPU idle time, InfiniBand is still generally the best choice. Plus, in my experience and depending on vendor, RoCEv2 can add configuration complexity and can lead to performance issues if not optimally configured, thus increasing operational risk.

And on top of the above, with supply and demand being as it is right now, you increasingly also need to take lead times and device availability into account, i.e. do you want Ethernet TODAY, or can you wait 6 months for an InfiniBand backend? Or vice versa.

And in my experience, depending on the type of client, the best choice for each of them may be different anyway, i.e. a Formula One team (think neoscaler / neocloud) wants that perfectly tuned and optimised race car with zero compromises, and can afford and justify having a team of technical specialists for every component of the car.

But as you drop down into the lower leagues, Formula 2, 3, 4 and so on (think Enterprise), this no longer holds true. Suddenly other factors come into play, like operational simplicity, cost vs return, familiarity of architecture, abundance of skills in the market, ease of integrating into existing operating models, etc., and in that case ‘GOOD may be good enough’, the phrase that has often led to Ethernet displacing other, more proprietary technologies.

The reality of course is that this is rarely a ‘cut and dried’ situation but more a sliding scale, and what is best for you should be given careful consideration.

In a future post, I’ll break down the technical and commercial trade-offs between InfiniBand vs Ethernet on the AI back-end, and how Ultra Ethernet could reshape the landscape entirely by turning that old, reliable and trusty ‘Ethernet Honda Civic’ into something more akin to that Formula One car!

My own perspective and experience — AI simply performed the CRC check.

Posted in Artificial Intelligence

Tagged ai, GPU, Idle Time, infiniband, roce, rocev2, ROI, uec, ultra ethernet


What’s next for AI Infrastructure?

Don’t worry if some of the terms below are new to you, I have linked my glossary here

Over the last year or so I’ve had so many meetings, read so many papers, watched hundreds of hours of educational videos, on where AI technology is going, in particular the technology that underpins AI functionality and infrastructure, so I thought I should really try and condense all this information into a high level post.

The Power Problem

Without doubt the biggest barrier to future AI evolution is power, both in terms of the power required for the various operations and for moving data between chips, but also the physics involved in moving electrons across copper wires on silicon dies, not just within a GPU domain but between GPU domains comprising clusters of thousands of GPUs. So as the old adage goes, we need to ‘do more with the same’ or ‘do the same with less’, and very smart people like those at Imec and TSMC are looking at both options. But what if eventually we could do a ‘double whammy’ of doing ‘more with less’, at least when it comes to AI efficiency?

So let’s have a very high level view of where we are, where we are going both short and longer term, and the various trends driving it. Any one of the technologies I mention below could easily be the subject of a dedicated post in its own right, and may well be in the future, as I find this subject fascinating.

Trend 1 – Squeezing Silicon Harder

So when it comes to doing ‘more with the same’, or should I say ‘Moore with the same’ 🙂, we are approaching the practical limits of how many transistors we can fit on a die/chip cut from a silicon wafer. Yes, I know they’ve been saying this for years, but now I’m starting to believe them. For example, NVIDIA’s Blackwell GPU has 208 billion transistors on a 4nm process, with the Rubin and Rubin Ultra GPUs on a 3nm process planned for release in 2026/7. Worth noting that the ‘nm’ figure commonly quoted with chips no longer equates to any single physical measurement such as gate size; instead it should be understood as a ‘generational label’ indicating a new process node technology.

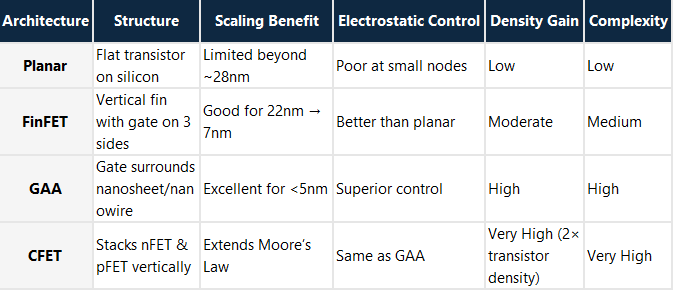

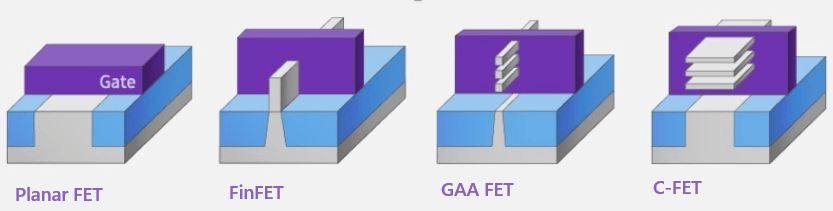

Beyond this, transistor architectures like FinFET and then Gate-All-Around (GAA) improve transistor density, with GAA reducing current leakage by surrounding the channel with the gate on all sides.

And as all real estate professionals know, when you’ve built as much as you can on a piece of land and you’ve used up all your X & Y axis space, the solution is to start building up and scale into the vertical plane. CFET does exactly that by stacking the two transistor polarities (nFET & pFET) on top of each other rather than side by side as in GAA, thus essentially doubling the transistor capacity on a die by adding this ‘2nd floor’. As of Q4 2025 CFET is still in the early prototyping phase.

The table and diagram below compare the evolution from Planar → FinFET → GAA → CFET.

Trend 2 – Beyond Electrons: The Photonic Future

So that buys a bit more time, but then the bottleneck and limitations start becoming the actual materials themselves: the silicon, and the copper wires that the electrons flow along. In the words of Scotty from Star Trek, ‘Ye cannae change the laws of physics’, so the very materials that are used need to be re-evaluated to maintain the momentum of progression.

Once electrons over copper become power‑limited, the only path left is moving data using photons (light). Photonics eliminates resistive heat, delivers higher reach, and radically reduces power per bit.

So silicon possibly makes way for alternative materials like Graphene, and electrons over copper make way for photonics, as the physics of electricity with regards to speed, distance and heat generation start to break down in favour of the properties of light. Manufacturers like NVIDIA are already adding photonic capabilities into chips like the Rubin Ultra GPU (due for release in 2027) by incorporating Co-Packaged Optics (CPO) modules adjacent to the GPUs.

NVIDIA has also introduced Quantum‑X and Spectrum‑X silicon photonic networking switches, leveraging CPO to overcome the scaling limits of traditional electrical signalling and optical transceivers in large‑scale AI deployments.

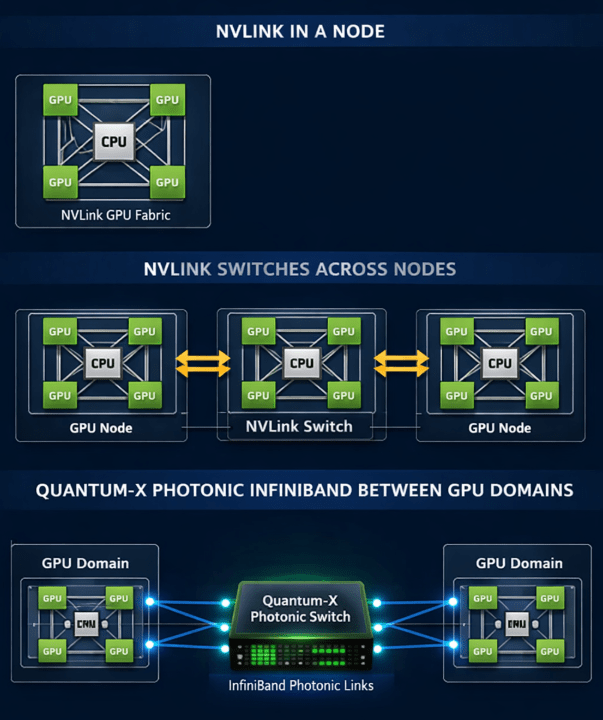

So what role do these photonic switches play? Well, let’s have a quick review of how GPUs interact with each other. At the node level, NVLink provides a high bandwidth, low latency mesh that connects GPUs (and CPUs) inside a single system, enabling very fast peer‑to‑peer communication and collective operations.

For systems designed for a distributed NVLink domain, like NVIDIA’s NVLxxx systems, NVLink Switches extend this fabric to interconnect GPUs across multiple nodes in a rack to ‘scale up’ the GPU domain. This creates a single, unified GPU domain with coherent, all‑to‑all connectivity, allowing massive configurations like NVIDIA’s NVL576, where 576 Rubin Ultra GPUs behave as one giant accelerator domain in a single Kyber rack.

Beyond rack scale, NVIDIA’s Quantum‑X Photonic InfiniBand switches provide photonic enabled ‘scale‑out‘ networking that links these NVLink GPU domains together across multiple racks. By integrating silicon photonics directly into the switch ASIC with CPO, Quantum-X reduces power consumption, improves signal integrity, and enables orders‑of‑magnitude scalability for multi‑rack AI fabrics compared with traditional electrical or pluggable optical approaches.

Likewise, Spectrum‑X Photonic switches bring the same CPO‑based silicon photonics to Ethernet networking, enabling cost and power efficient connectivity for AI “factories” comprised of millions of GPUs. The shift from electrical signalling and discrete optics to co‑packaged photonics is critical as AI clusters grow beyond what copper or classic optics can efficiently support.

Trend 3 – Beyond Classical: The Quantum Horizon

And what about the ‘double whammy’ I mentioned above? Well, that obviously requires a paradigm shift in computing, which takes the form of Quantum computing. This is no longer science fiction, as Quantum computers are already with us, with chips like ‘Willow’ from Google.

One of my previous blog posts was on possible options for reducing the power requirements of AI, one of which was ‘Ternary computing’, where we use ‘Trits’ instead of ‘bits’. A ‘Trit’ can have 3 states rather than the binary 2, giving 50% more states and roughly 58% more information in every ‘Trit’ (log2 3 ≈ 1.585 bits). But Quantum takes that to the next level, where each bit or ‘qubit’ can be a 1 and a 0 at the same time.
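As a quick sanity check on how much extra a trit carries, here is a minimal Python sketch. Three states is 50% more states than two, which works out at log2(3) ≈ 1.585 bits of information per trit. The helper name `trits_needed` is hypothetical, purely for illustration.

```python
import math

# Information carried by one three-state digit, measured in bits
BITS_PER_TRIT = math.log2(3)


def trits_needed(bits: int) -> int:
    """Smallest number of trits that can represent every value of a `bits`-wide word."""
    n = 0
    while 3 ** n < 2 ** bits:   # exact integer comparison, no rounding issues
        n += 1
    return n


print(f"One trit carries about {BITS_PER_TRIT:.3f} bits")
print(f"A 32-bit word fits in {trits_needed(32)} trits")
```

So a 32-bit value needs only 21 trits, which is where the potential saving in circuitry comes from.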

Now that sounds an impossible concept, but the way it was explained to me, which kind of made sense, is this: think of a bit which can have 2 states, like a coin. It can be ‘heads’ or ‘tails’, but if you flip that coin in the air, while it is spinning it can be said to be both ‘heads and tails’, or in Quantum terms it is in ‘Superposition’. These qubits link to each other via a process called ‘Entanglement’, which then allows processing to evaluate all possible outcomes simultaneously, as every combination can be evaluated in parallel due to the Superposition of all the qubits. Now it should be said that Quantum isn’t the answer to ‘life, the universe and everything’ (that, as we all know, is 42), and you are certainly not going to run Windows on it. But it does meet a very specific requirement when it comes to the fundamentals of linear equations, like complex addition and multiplication functions, and that is where quantum absolutely leaves traditional compute in the dust.

This has obviously caused concern that encryption methods like RSA and ECC, while nigh on impossible to break with conventional computing power, could be broken quite easily with the simultaneous evaluation properties of quantum. The day when all widely used public-key encryption methods could be vulnerable to commercially available quantum computing has been named ‘Q-Day’ and could be as close as 2030, which is why many vendors are now offering post-quantum cryptography (PQC) solutions.

Quantum infrastructure is very different from the infrastructure we are used to with silicon and copper, instead using materials like Aluminum (Al) or Niobium (Nb), and the machines look more like chandeliers than computers.

These three trends form a pipeline of innovation: more silicon efficiency today, photonics to scale tomorrow, and quantum for the problems classical compute can’t touch.

So the answer to the question at the top of this post, ‘what’s next for AI Infrastructure’, is ultimately a combination of them all, with ‘Quantum AI’ for specific workloads, which will no doubt complement more traditional enterprise grade AI, and with several pit stops along the way utilising the various other technologies mentioned in this post.

As always exciting times ahead.

If you are exploring how to turn AI into something real, not just hype, I’ll be diving deeper into the various vendor solutions I see, architectures and deployment patterns that are actually working in practice, and yes I’m sure our friend Cisco UCS will be featuring in some of them. So follow along if you want straight talk on what delivers outcomes versus what just sounds impressive. More to come at www.ucsguru.com.

Can thinking in 3’s Solve AI’s Power Problem?

As a network specialist for over 35 years, I can ‘think in binary’ and can make quite complex subnet / supernet calculations in my head, from years of looking at IP addresses and immediately identifying whether they are on the same network or need to route, etc. It’s almost like seeing the world in green 1’s and 0’s, like the character ‘Neo’ in the film ‘The Matrix’.

But what if computing wasn’t built on twos at all, but instead on a 3 state architecture, where it was no longer just ‘On or Off’ but also a mythical 3rd state between the 2? So rather than just 1 or 0, we would have -1, 0, and 1, a traffic light compared to a light bulb if you like, equating to 3 voltage states: low, mid & high. Adding this 3rd state drastically increases processing power while reducing the circuitry and power required for it.
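For anyone who doesn’t see subnets in their sleep, the same ‘same network or route?’ check can be sketched with Python’s standard `ipaddress` module. The addresses and the helper name `same_network` are just illustrative choices of mine.

```python
import ipaddress


def same_network(a: str, b: str) -> bool:
    """True if two interface addresses (given with prefix length) share a subnet."""
    return ipaddress.ip_interface(a).network == ipaddress.ip_interface(b).network


# Same /24, so traffic stays on the local segment
print(same_network("10.1.1.10/24", "10.1.1.200/24"))

# Different /24s, so traffic needs to be routed
print(same_network("10.1.1.10/24", "10.1.2.10/24"))

# Supernetting: widen a /24 by one bit to get the enclosing /23
print(ipaddress.ip_network("10.1.0.0/24").supernet())
```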
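To make the -1, 0, 1 idea a bit more concrete, here is a minimal sketch of ‘balanced ternary’, the number system that uses exactly those three digits. The function names are hypothetical and this is an illustration of the encoding only, not of how any particular ternary hardware works.

```python
def to_balanced_ternary(n: int) -> list:
    """Return the balanced-ternary digits of n, most significant first, each in {-1, 0, 1}."""
    if n == 0:
        return [0]
    digits = []
    while n != 0:
        r = n % 3           # Python's % always yields 0, 1 or 2 here
        if r == 2:          # a 2 is written as -1 with a carry into the next trit
            r = -1
        digits.append(r)
        n = (n - r) // 3
    return digits[::-1]


def from_balanced_ternary(digits: list) -> int:
    """Convert balanced-ternary digits (most significant first) back to an integer."""
    value = 0
    for d in digits:
        value = value * 3 + d
    return value


print(to_balanced_ternary(5))    # 9 - 3 - 1
print(to_balanced_ternary(-5))   # negatives need no separate sign bit
```

One nice property that falls out of this scheme is that negative numbers are just the digit-by-digit mirror image of positive ones.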

For example, we all know a 32 BIT IP address provides 4.29 billion unique combinations of 1’s and 0’s (2^32), but add a 3rd state and now that 32 TRIT IP address provides about 1.85 quadrillion unique addresses (3^32).

But obviously changing the fundamental building block on which practically all technology is based, would be a challenge, hence the much simpler solution of just extending the existing 2 state binary addressing scheme and call it IPv6 in order to overcome IPv4 scale limitations as well as introduce additional features.

So perhaps we have missed the boat with regards to introducing 3 state architecture as the IT standard, but there could well be a time where it makes absolute sense to adopt it within an AI pod to drastically improve performance and reduce power requirements, and after all it’s power which is generally the limiting factor on scaling out and locating AI solutions.

The concept of Ternary computing is nothing new. The Russians built a Ternary computer called ‘Setun’ as a research project back in 1958, but like the Betamax / Blu-ray of its time, it just didn’t get the adoption against the far more popular and standard ‘VHS’ / streaming of Binary based systems, despite being superior in many ways.

Another challenge with the 3 state architecture is being able to clearly identify each state. That is easy in an ‘is it On or is it Off’ world but not so easy with 3 states: factor in noise, signal variables and hardware compatibility and the margin for error is almost non-existent. But with modern semiconductor materials like Gallium Nitride (GaN) or Gallium Arsenide (GaAs) within the transistors, this is now far more feasible.

So in the future it may be the case that the AI ‘Backend’ for GPU to GPU communication is Ternary based within the AI Pod, with the conversion to standard Binary signalling done at the Pod edge to maintain global connectivity standards. If you think about it, this is pretty similar to how a GPU converts CPU instructions, but at a Pod scale level. And if having a different type of backend network that wins out because of superior performance sounds unlikely, just look up InfiniBand 😊

Closing thought: if binary gave us the Internet, could ternary give us sustainable AI?

Posted in Artificial Intelligence

Tagged ai, Artificial Intelligence, nvidia, technology, ternary


Essential AI Terms: Your Comprehensive Glossary

I spend a lot of time explaining AI terms and acronyms in conversations particularly network related terms, so I decided to create a single reference post with the most common ones. If you’re starting your AI journey or just need a quick refresher, I hope this glossary helps you cut through the jargon and understand the essentials.

Generic AI Terms

ChatGPT (Chat Generative Pre-trained Transformer): A popular Chatbot.

Chatbot: A computer program that uses AI to understand and respond to human language in a conversational way, i.e. ChatGPT.

AI Agent: Can operate without human interaction, and has a specific objective, i.e. book a flight, analyse data.

Hallucination: When an AI Chatbot generates incorrect or fabricated information but that sounds plausible.

Prompt Engineering: Intelligently crafting inputs to guide LLM responses to more accurately meet your intent, i.e. asking ChatGPT something in such a way that gives you exactly the response you were looking for.

Agentic AI: Advanced form of AI focused on autonomous decision making and goal driven behaviour with limited human intervention. Uses AI agents that can plan, adapt and execute tasks in dynamic environments.

Physical AI: AI that enables machines and robots to perceive, understand and interact with the physical world.

FP – Floating Point, i.e. FP4, FP16 up to FP32. The number indicates how many bits are used to store each value: the more bits, the higher the precision but the slower the computation. The sweet spot is generally FP16, still accurate but with faster training time. FP8 is superfast and is used in inferencing.

Token: A small piece of text, usually a word, part of a word, or punctuation, i.e. the phrase ‘AI is amazing.’ is 4 ‘tokens’: “AI”, “is”, “amazing”, “.”. AI converts all inputs and outputs to ‘tokens’, and the number of ‘tokens’ determines how much text you can process and how much it costs. Tokens are then converted to ‘Vectors’ to associate a numeric meaning and context with the string of tokens, which are then stored in the vector database for similarity search.
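The precision trade-off is easy to see with Python’s standard `struct` module, which can store a number in 16-bit and 32-bit IEEE 754 formats. This is only a sketch: `struct` has no FP8 or FP4 format, so those are not shown, and the helper name `round_trip` is hypothetical.

```python
import struct


def round_trip(value: float, fmt: str) -> float:
    """Store a Python float in the given IEEE 754 format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


x = 0.1
fp16 = round_trip(x, "<e")   # 16-bit half precision
fp32 = round_trip(x, "<f")   # 32-bit single precision

print(f"FP16 stores 0.1 as {fp16!r} (error {abs(fp16 - x):.2e})")
print(f"FP32 stores 0.1 as {fp32!r} (error {abs(fp32 - x):.2e})")
```

Fewer bits means a bigger rounding error per value, but half the memory and bandwidth, which is the trade being made when dropping to lower precision formats.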
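As a rough illustration of the ‘AI is amazing.’ example, here is a deliberately naive tokeniser that splits on words and punctuation. Real LLM tokenisers use learned sub-word vocabularies, so actual token counts will differ; the function name is hypothetical.

```python
import re


def naive_tokenize(text: str) -> list:
    """Split text into whole words and individual punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = naive_tokenize("AI is amazing.")
print(tokens, "->", len(tokens), "tokens")
```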

Embedding: A numerical representation of meaning, concept, or intent learned by an AI model.

For example, the words “coffee” and “tea” produce similar embeddings because they have similar semantic meaning.

An embedding is expressed as a vector, a list of numerical values, where each number captures some aspect of the underlying meaning. These vectors are stored in a vector database to enable semantic search and retrieval.

Vector Database: A vector database is a type of database used in AI that stores data as vectors (lists of numbers). Instead of searching for exact matches, it enables similarity search, finding items that are “close” in meaning or features. For example, if you like Product A, the system may suggest Product B because their vectors share several similarities.
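A tiny sketch of what ‘close in meaning’ looks like numerically, using cosine similarity over hand-picked 3-number vectors. The vectors, the `store` dictionary and the function names are all hypothetical; real embeddings have hundreds or thousands of dimensions and are produced by a model, not typed in by hand.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# HYPOTHETICAL embeddings, invented purely for illustration
store = {
    "coffee": [0.9, 0.8, 0.1],
    "tea": [0.8, 0.9, 0.2],
    "router": [0.1, 0.2, 0.9],
}


def nearest(query: str, k: int = 1) -> list:
    """Return the k stored items most similar to the query item."""
    others = [name for name in store if name != query]
    others.sort(key=lambda name: cosine_similarity(store[query], store[name]), reverse=True)
    return others[:k]


print(nearest("coffee"))
```

A production vector database does the same comparison, just with approximate indexes so it stays fast across millions of vectors.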

Context Window: The maximum number of tokens an LLM can process at once. An example being the ‘memory within a chat window’ of what information you have previously given.

Inferencing: Using a pre-trained AI model to generate predictions or responses based solely on its training data.

Key Point: No external updates; the model relies on internal knowledge.

Analogy: Answering a question from memory.

RAG: Retrieval Augmented Generation.

An AI technique that combines a language model with real-time data retrieval from external sources before generating an answer.

Key Point: Adds fresh, domain-specific context to overcome model knowledge cutoff.

Analogy: Checking a reference book before answering.
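The retrieve-then-generate flow can be sketched in a few lines. This is a toy under loud assumptions: the documents are made up, retrieval is simple keyword overlap rather than embedding search, and no model is actually called; the sketch stops at building the prompt that would be sent to one.

```python
import re

# HYPOTHETICAL knowledge base, standing in for an external data source
DOCUMENTS = [
    "NVL72 racks are liquid cooled and can draw around 120 kW.",
    "RoCEv2 carries RDMA over routable Ethernet.",
    "A CDU circulates coolant between the primary and secondary loops.",
]


def words(text: str) -> set:
    """Lower-cased set of words, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def retrieve(question: str, docs: list, k: int = 1) -> list:
    """Rank documents by how many words they share with the question."""
    q = words(question)
    return sorted(docs, key=lambda d: len(q & words(d)), reverse=True)[:k]


def build_prompt(question: str, docs: list) -> str:
    """Prepend the retrieved context to the question before it goes to the model."""
    context = "\n".join(retrieve(question, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(build_prompt("How much power can an NVL72 rack draw?", DOCUMENTS))
```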

LLMs Large language models: AI programs trained on massive datasets to understand and generate human language.

LRM: Large Reasoning Model. A form of LLM that has undergone reasoning-focused fine tuning to handle multi-step reasoning tasks. Instead of just predicting the next word (like traditional LLMs), it works through intermediate reasoning steps before giving its answer.

Fine Tuning – Is the process of taking a pre‑trained AI model and adjusting it with new, specific data so it performs better on a particular task. Like teaching an AI model that already knows a lot of general stuff to specialise in your subject.

MCP: Model Context Protocol: MCP is a specialised protocol designed for AI models. It standardises how models connect to external tools and data sources, making it easier to provide context and structured results. Unlike APIs, which vary by service, MCP acts as a universal adapter for AI systems, ensuring consistent integration across different environments.

MoE: Mixture of Experts: An AI model design where multiple specialised “expert” networks are trained for different tasks or patterns, and a gating mechanism decides which expert(s) to activate for each input. This makes the model more efficient, since only a few experts are used at a time instead of the whole network.
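A minimal sketch of the gating step, assuming the common ‘top-k’ approach where a softmax over gate scores picks the k best experts. The scores are invented and the function names are hypothetical; real models learn the gate and differ in the details.

```python
import math


def softmax(scores: list) -> list:
    """Turn raw gate scores into probabilities that sum to 1."""
    peak = max(scores)                      # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(gate_scores: list, k: int = 2) -> dict:
    """Pick the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    weight_sum = sum(probs[i] for i in top)
    return {i: probs[i] / weight_sum for i in top}


# HYPOTHETICAL gate scores for one token across four experts
chosen = route([2.0, 0.5, 1.0, -1.0], k=2)
print(chosen)   # only experts 0 and 2 would run for this token
```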

All‑reduce: A communication step used in multi‑GPU training where each GPU shares its results, and they are combined into one final value that every GPU receives. This keeps all GPUs in sync so they update the model the same way during distributed training.

Infrastructure, Hardware

CPO: Co-Packaged Optics modules use silicon photonics to integrate optical components directly alongside high-speed electronic chips, like switch ASICs or AI accelerators, within a single package.
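The end result of an all-reduce can be simulated in plain Python. This sketch only shows what every GPU ends up holding; real implementations (for example in NCCL) use ring or tree algorithms to move the data efficiently across the fabric. The gradients and the function name are hypothetical.

```python
def all_reduce_sum(per_gpu_values: list) -> list:
    """Sum element-wise across GPUs and hand every GPU its own copy of the result."""
    reduced = [sum(column) for column in zip(*per_gpu_values)]
    return [list(reduced) for _ in per_gpu_values]


# HYPOTHETICAL gradients held by three GPUs after a training step
grads = [
    [1.0, 2.0],   # GPU 0
    [3.0, 4.0],   # GPU 1
    [5.0, 6.0],   # GPU 2
]

synced = all_reduce_sum(grads)
print(synced)     # every GPU now holds the same summed gradients
```

Because every GPU has to wait for this step to complete, it is exactly the kind of traffic where backend network congestion turns into GPU idle time.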

Hyperscaler – Large Cloud Provider, i.e. AWS, Azure, GCP.

Neoscaler/Neocloud – Emerging providers focused on specialised AI infrastructure at scale, typically smaller and more agile than a Hyperscaler, i.e. CoreWeave, Lambda Labs. Commonly provide GPUaaS offerings.

Offtaker: The downstream client actually booking and paying for the resources from the GPUaaS provider.

GPU: Graphics Processing Unit is a specialised processor originally designed for rendering graphics, now widely used in AI for its ability to perform thousands of parallel computations simultaneously. This architecture makes GPUs ideal for accelerating deep learning tasks such as training neural networks and running inference, dramatically reducing processing time compared to traditional CPUs

DPU: Data Processing Unit: is a specialised processor designed to offload and accelerate data-centric tasks such as networking, storage, and security from the CPU. DPUs typically combine programmable cores, high-speed network interfaces, and hardware accelerators to handle packet processing, encryption, and virtualisation, improving performance and freeing up CPU resources for application workloads. (e.g., NVIDIA BlueField).

TPU: Tensor Processing Unit. Purpose-built chip by Google for AI and ML acceleration, offering significant speed and efficiency gains over GPU or CPU based systems, but not as flexible, as it is optimised for a narrower set of operations.

NPU (Native Processing Unit) is an emerging class of processor that uses photonic (light-based) technology instead of traditional electronic signalling to perform computations. By leveraging photons, NPUs achieve ultra-high bandwidth, dramatically lower power consumption, and minimal heat generation, making them ideal for accelerating AI workloads and large-scale data processing.

RDMA (Remote Direct Memory Access): Enables direct memory access between nodes without CPU involvement.

InfiniBand: A high‑performance, low‑latency networking technology widely used in supercomputing and AI training clusters. It supports advanced features such as RDMA (Remote Direct Memory Access) and collective offload, enabling efficient data movement at scale. While InfiniBand is an open standard maintained by the InfiniBand Trade Association (IBTA), in practice the ecosystem is heavily dominated by NVIDIA, following its acquisition of Mellanox in 2020.

RoCEv2: A protocol that enables RDMA over Ethernet using IP headers for routability. It requires lossless / Converged Ethernet (PFC/DCQCN) and is common in HPC and storage networks.

Ultra Ethernet: An open standard designed to evolve Ethernet for AI and HPC workloads, focusing on low latency, congestion control, and scalability. It aims to provide an Ethernet-based alternative to InfiniBand for GPU clusters.

NVLink – NVIDIA’s high-speed GPU interconnect, usually within a node.

NVSwitch – An advanced switch fabric that connects multiple NVLink-enabled GPUs inside large servers (e.g., DGX systems). Connects up to 72 GPUs (NVIDIA’s NVL72 rack-scale system).

DGX GB200: This system uses the NVIDIA GB200 Superchip, which combines the Grace CPU with Blackwell (B200) GPUs.

DGX GB300: This system is based on the enhanced NVIDIA GB300 Superchip, featuring the Grace CPU with the Blackwell Ultra (B300) GPU.

NVIDIA NVL4 Board – GB200 Grace Blackwell ‘SuperChip’ board: 4 x Blackwell GPUs with 768 GB HBM3E memory and 2 Grace CPUs with 960 GB LPDDR5X memory, connected via NVLink to perform like a single unified processor. Designed for hyperscale AI with trillions of parameters.

NVIDIA NVL72 – Essentially 18 NVL4 boards in a single rack connected via NVLink, with 72 Blackwell GPUs and 36 Grace CPUs, roughly 13.8 TB of HBM3E (18 x 768 GB) alongside the Grace CPUs’ LPDDR5X memory, all acting like a single giant GPU pool. Definitely more than the sum of its parts. Liquid cooled and can draw ~120 kW.

NVIDIA NVL144 – NVIDIA Vera Rubin NVL144 CPX contains 144 Rubin GPUs and 36 Vera CPUs in a single rack, providing 8 exaflops of AI performance, 7.5x more than NVIDIA GB300 NVL72 systems. Availability expected H2 2026.

NVIDIA Kyber NVL576 – 576 Rubin Ultra GPUs and 144 Vera CPUs in a single cohesive GPU domain over 2 racks (1 GPU Kyber rack and 1 Kyber side car rack for power conversion, chillers and monitoring), drawing up to 600kW. Provides 15 exaflops at FP4 (Inference) and 5 exaflops at FP8 (Training). Availability expected H2 2027.
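The rack-level numbers can be rolled up from the per-board figures quoted in the NVL4 entry above. This is just the arithmetic implied by this glossary (18 boards per rack, decimal terabytes), not an official NVIDIA specification sheet.

```python
# Per-board figures as quoted in the NVL4 entry of this glossary
BOARD = {"gpus": 4, "cpus": 2, "hbm3e_gb": 768, "lpddr5x_gb": 960}
BOARDS_PER_RACK = 18

# Multiply every per-board figure up to rack level
rack = {key: value * BOARDS_PER_RACK for key, value in BOARD.items()}

print(f"GPUs: {rack['gpus']}, CPUs: {rack['cpus']}")
print(f"HBM3E: {rack['hbm3e_gb'] / 1000:.1f} TB")
print(f"LPDDR5X: {rack['lpddr5x_gb'] / 1000:.1f} TB")
```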

NVIDIA BasePOD: A reference architecture for building GPU‑accelerated clusters using NVIDIA DGX systems. It provides validated designs for flexible AI and HPC deployments, allowing organisations to choose networking, storage, and supporting infrastructure from different vendors.

NVIDIA SuperPOD: A turnkey AI data center solution from NVIDIA, delivering fully integrated GPU clusters at massive scale. It combines DGX systems, high‑speed networking, storage, and NVIDIA software into a complete package for enterprise and research workloads.

Trainium: is a custom AI accelerator ASIC (so even more specialised than a TPU) developed by Amazon Web Services (AWS) to deliver high-performance training and inference for machine learning models at lower power consumption and cost compared to general-purpose GPUs. Designed for deep learning workloads, Trainium offers optimised tensor operations and scalability for large-scale AI training in the cloud.

Smart NIC: is an advanced network interface card that includes onboard processing capabilities, often using programmable CPUs or DPUs, to offload networking, security, and virtualisation tasks from the host CPU. This enables higher performance, lower latency, and improved scalability for data centre and cloud environments by handling functions such as packet processing, encryption, and traffic shaping directly on the NIC.

HBM: (High Bandwidth Memory) dedicated to a GPU, energy efficient, Wide bus up to 1024bits directly on the same package as the GPU minimising latency.

SXM (Server PCI Module) GPU module mounted directly on the motherboard offering superior performance and scalability for large-scale projects like AI training,

PCIe (Peripheral Component Interconnect Express) Standard expansion card form factor. The GPU is inserted into a PCIe slot, which provides flexibility and wider compatibility, making it a cost-effective choice for smaller-scale tasks.

CDU – Cooling Distribution Unit is a device used in liquid‑cooled data centers to circulate coolant between the building’s central cooling system (primary loop) and the liquid‑cooled IT equipment (secondary loop). It regulates coolant temperature, pressure, and flow to ensure safe, efficient heat removal from high‑density compute systems. The CDU usually contains the heat exchanger.

Primary cooling loop: This is the ‘Facility level’ loop. It usually operates at high pressures and volumes, serving many racks or an entire data hall, and carries facility cooling water (FCW), often mixed with glycol.

Secondary cooling loop: This is the ‘Rack level’ loop. It circulates coolant to absorb heat from GPUs/CPUs and transfers it to the primary loop via a heat exchanger. Coolant from the primary and secondary loops never mixes; the two loops are isolated from each other by the heat exchanger.

Coolant: Water (purified/deionized) mixed with glycol (propylene or ethylene). A typical secondary loop fluid is PG25 (25% propylene glycol, 75% de-ionised water).

Single‑Phase Cooling (Water Cooling): A cooling method where water or treated coolant stays liquid while absorbing heat. The coolant warms up but does not change phase, making it simpler and widely used in today’s liquid‑cooled AI racks.
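Because a single-phase coolant only warms up, the heat it carries away follows the basic sensible-heat relationship: heat = mass flow x specific heat x temperature rise. The sketch below turns that into a rough flow-rate estimate. The function name is mine, and the defaults are for plain water (about 4.186 kJ/kg·K and 1 kg per litre). PG25 has a somewhat lower specific heat and slightly higher density, so take real values from the coolant datasheet.

```python
def required_flow_lpm(heat_kw, delta_t_c,
                      cp_kj_per_kg_k=4.186,   # plain water; an assumption, adjust for PG25
                      density_kg_per_l=1.0):  # plain water; an assumption, adjust for PG25
    """Coolant flow in litres/minute needed to remove heat_kw
    with a coolant temperature rise of delta_t_c (degrees C)."""
    if delta_t_c <= 0:
        raise ValueError("delta_t_c must be positive")
    # kW is kJ/s, so this gives mass flow in kg/s
    mass_flow_kg_s = heat_kw / (cp_kj_per_kg_k * delta_t_c)
    # convert kg/s to litres/minute
    return mass_flow_kg_s / density_kg_per_l * 60.0


# Example: 100 kW of heat with a 10 degree C rise across the cold plates
print(f"{required_flow_lpm(100, 10):.1f} L/min")
```

Halving the allowed temperature rise doubles the required flow, which is why flow and temperature are both things the CDU has to regulate.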

Two‑Phase Cooling: A cooling method where the coolant boils inside the cold plate, absorbing heat by changing from liquid to vapor. This provides very high heat‑removal efficiency before the coolant condenses back to liquid. The boiling point of a two‑phase coolant is typically between ~30 °C and 60 °C.

Tooling / Software

NVIDIA Mission Control is an integrated AI‑factory management platform that unifies deployment, monitoring, and orchestration of large‑scale GPU clusters.

It provides a central management plane that automates operations, reduces downtime, and accelerates model development across enterprise AI infrastructure. Built on Kubernetes‑based orchestration, it combines cluster management, workload scheduling, and real‑time system health monitoring for HPC and AI workloads. BCM & NetQ are deployed as part of Mission Control.

Bright Cluster Manager (BCM) is a general-purpose HPC and AI cluster lifecycle management platform, originally developed by Bright Computing (acquired by NVIDIA in 2022), used to provision, configure, and operate multi-vendor CPU and GPU clusters. It handles bare-metal provisioning, node management, monitoring, and integration with schedulers and Kubernetes. Bright is no longer sold as a standalone product and is now delivered as part of NVIDIA’s AI infrastructure stack.

NVIDIA Base Command Manager (BCM) (not to be confused with Bright Cluster Manager above) is an NVIDIA-specific AI infrastructure management and orchestration layer built on top of Bright Cluster Manager. It adds deep awareness of NVIDIA GPUs, NVLink/NVSwitch topologies, and AI software stacks, and integrates tightly with Kubernetes, the NVIDIA GPU Operator, and NGC. It is designed for operating large-scale NVIDIA AI systems such as DGX, HGX, NVL72, and NVIDIA Cloud Partner (NCP) platforms.

NGC (NVIDIA GPU Cloud) is NVIDIA’s curated catalog of GPU-optimized software, including prebuilt AI and HPC containers, models, libraries, and deployment packages. It enables clusters to run NVIDIA-validated workloads efficiently and reproducibly, and integrates with Base Command Manager for automated deployment on GPU-accelerated systems

NCP (NVIDIA Cloud Partner): An NCP is a service provider approved by NVIDIA to deliver GPU‑accelerated cloud services using a standardised, high‑performance NCP reference architecture.

Slurm: (Simple Linux Utility for Resource Management) An open‑source workload manager that schedules and runs jobs on clusters of computers. It’s widely used in high‑performance computing (HPC) and AI to allocate resources, manage queues, and coordinate parallel tasks across many nodes.
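To make the 'allocate resources and run jobs' part a bit more concrete, here is a small Python sketch that renders a Slurm batch script. The helper name and the example values are mine. The `#SBATCH` options used (`--job-name`, `--nodes`, `--gres`, `--ntasks-per-node`, `--time`) are standard, but whether GPUs are requested through `--gres` or some other option depends on how your cluster is configured, so treat this as a starting point only.

```python
def render_sbatch(job_name, nodes, gpus_per_node, time_limit, command):
    """Build the text of a simple Slurm batch script (one task per GPU)."""
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --gres=gpu:{gpus_per_node}",         # GPUs requested per node
        f"#SBATCH --ntasks-per-node={gpus_per_node}",  # one task per GPU
        f"#SBATCH --time={time_limit}",                # HH:MM:SS wall-clock limit
        "",
        f"srun {command}",                             # launch the tasks on the allocation
    ]
    return "\n".join(lines) + "\n"


# Hypothetical job: 4 nodes with 8 GPUs each, 2 hour limit
print(render_sbatch("train-demo", 4, 8, "02:00:00", "python train.py"))
```

You would save the output to a file and submit it with sbatch.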

NVIDIA NetQ is a scalable network‑operations toolset that provides real‑time visibility, troubleshooting, and validation for Cumulus and SONiC‑based data center fabrics. It collects telemetry from switches and hosts to deliver actionable insights into network health, helping operators quickly detect issues and maintain smooth AI‑fabric operations.

NMX‑M (NMX Manager) is NVIDIA’s management tool for monitoring the NVLink/NVSwitch fabric. It collects telemetry from the GPU interconnect, shows health and performance information, and helps operators understand and manage the fabric. It runs as part of the NVIDIA Mission Control software stack.

NCCL (NVIDIA Collective Communications Library, pronounced “Nickel”) – A library developed by NVIDIA that enables fast, efficient communication between GPUs. It provides optimised routines for collective operations such as all‑reduce, broadcast, and gather, making multi‑GPU and multi‑node training in AI and HPC scalable and performant. NCCL runs over NVLink/NVSwitch or PCIe within a node, and InfiniBand or RoCEv2 between nodes. It uses CUDA under the hood, so it relies on having a compatible CUDA version installed.
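If the collective names are new to you, the toy Python sketch below shows what each one computes. It is plain Python lists standing in for GPUs ('ranks'), with no NCCL calls at all, so it illustrates the semantics only and says nothing about how NCCL actually moves the data.

```python
def all_reduce_sum(per_rank):
    """Every rank contributes a vector; every rank ends up with the element-wise sum."""
    total = [sum(column) for column in zip(*per_rank)]
    return [list(total) for _ in per_rank]


def broadcast(per_rank, root=0):
    """Every rank ends up with a copy of the root rank's vector."""
    return [list(per_rank[root]) for _ in per_rank]


def gather(per_rank, root=0):
    """Only the root rank receives every rank's vector; the others receive nothing."""
    return [
        [list(v) for v in per_rank] if rank == root else None
        for rank in range(len(per_rank))
    ]


# Three pretend GPUs, each holding a two-element gradient
grads = [[1, 2], [3, 4], [5, 6]]
print(all_reduce_sum(grads))
print(broadcast(grads, root=1))
print(gather(grads, root=0))
```

All‑reduce is the one you will hear about most, as it is how gradients are summed across GPUs during distributed training.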

CUDA (Compute Unified Device Architecture): A software platform developed by NVIDIA that enables programs to run thousands of tasks in parallel on GPUs, unlocking massive speedups for computing beyond graphics.

MIG (Multi‑Instance GPU) allows a single NVIDIA GPU to be split into up to seven isolated GPU instances, each with its own dedicated compute cores, memory, and cache. This lets multiple users or workloads run securely and independently on the same physical GPU, improving utilisation and efficiency.

Hugging Face: A popular open‑source platform where developers share, build, and deploy AI models and datasets. It provides tools like the Transformers library and hosts millions of pre‑trained models that make it easy to develop AI applications. Think of it like an ‘AI App Store’

Useful Troubleshooting Commands

nvidia-smi (NVIDIA System Management Interface): A command‑line tool that monitors and manages NVIDIA GPUs, providing real‑time details such as utilization, temperature, memory usage, and running processes. It is built on the NVIDIA Management Library (NVML) and allows administrators to query or modify GPU state on supported NVIDIA hardware. It’s the primary command for GPU debugging.
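For scripting, nvidia-smi can emit CSV using its `--query-gpu` and `--format=csv,noheader,nounits` options. Below is a minimal Python sketch that runs the query and turns each GPU into a dictionary. The function names and the sample text are mine; the sample is illustrative and was not captured from a real system.

```python
import csv
import io
import subprocess

QUERY_FIELDS = ["index", "name", "temperature.gpu",
                "utilization.gpu", "memory.used", "memory.total"]

# Illustrative sample only (hypothetical values)
SAMPLE = """\
0, NVIDIA H100 80GB HBM3, 35, 0, 1024, 81559
1, NVIDIA H100 80GB HBM3, 36, 97, 70000, 81559
"""


def parse_gpu_csv(text):
    """Parse nvidia-smi CSV output (noheader, nounits) into a list of dicts."""
    gpus = []
    for record in csv.reader(io.StringIO(text), skipinitialspace=True):
        if record:
            gpus.append(dict(zip(QUERY_FIELDS, (f.strip() for f in record))))
    return gpus


def query_gpus():
    """Run nvidia-smi on this host and return the parsed result."""
    result = subprocess.run(
        ["nvidia-smi",
         f"--query-gpu={','.join(QUERY_FIELDS)}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


for gpu in parse_gpu_csv(SAMPLE):
    print(gpu["index"], gpu["name"], gpu["utilization.gpu"] + "%")
```

On a GPU server you would call query_gpus() rather than parsing the sample.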

nvidia-smi topo -m – Displays GPU‑to‑GPU, GPU‑to‑NIC, and interconnect topology including PCIe, NVLink, and NVSwitch distances. Use it to diagnose suboptimal topology or performance issues.

lspci – Shows all PCI devices on a system. Use it to verify that GPUs, NICs (Ethernet/InfiniBand), NVSwitch cards, or DPUs are present and enumerated correctly by the OS.

ethtool – Provides detailed information and configuration options for Ethernet interfaces. Use it to verify link speed, duplex, driver, firmware, and RDMA/RoCE NIC‑related offload settings.

DCGM (Data Center GPU Manager) is NVIDIA’s toolkit for monitoring, managing, and diagnosing GPU health and performance in datacenter environments. It provides telemetry, fault detection, NVLink statistics, and management APIs to keep large GPU clusters stable and efficient.

DCGMI (Data Center GPU Manager Interface) – dcgmi is the command-line interface for NVIDIA DCGM, used by administrators and automation tools to query, configure, and troubleshoot GPUs. It allows operators to check GPU health, run diagnostics, view utilisation and errors, and integrate GPU monitoring into scripts and operational workflows.

ipmitool sensor – Reads hardware sensor data exposed through IPMI on a server’s BMC. It displays metrics such as temperatures, voltages, fan speeds, and power readings. It’s commonly used for quick health checks and troubleshooting hardware issues on remote systems.
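The output of ipmitool sensor is a pipe-separated table (name, reading, unit, status, then thresholds), which makes it easy to filter. The Python sketch below picks out sensors in an alarm state. The function names and sample text are mine, and the set of alarm status codes is my assumption based on the short codes ipmitool commonly prints, so check it against what your BMC actually reports.

```python
# Status codes treated as alarms (an assumption; verify on your platform)
ALARM_STATES = {"nc", "cr", "nr", "lnc", "lcr", "lnr", "unc", "ucr", "unr"}

# Illustrative sample only (hypothetical values)
SAMPLE = """\
CPU Temp     | 45.000  | degrees C | ok     | na      | na      | na | 95.000 | 100.000 | na
FAN1         | 300.000 | RPM       | cr     | 300.000 | 500.000 | na | na     | na      | na
PSU2 Status  | 0x0     | discrete  | 0x0100 | na      | na      | na | na     | na      | na
"""


def parse_ipmi_sensors(text):
    """Split each row on '|' and keep the first four columns."""
    sensors = []
    for line in text.splitlines():
        cols = [c.strip() for c in line.split("|")]
        if len(cols) < 4:
            continue  # not a sensor row
        name, reading, unit, status = cols[:4]
        sensors.append({"name": name, "reading": reading,
                        "unit": unit, "status": status})
    return sensors


def sensors_in_alarm(sensors):
    """Return only the sensors whose status is a known alarm code."""
    return [s for s in sensors if s["status"].lower() in ALARM_STATES]


for s in sensors_in_alarm(parse_ipmi_sensors(SAMPLE)):
    print(f'{s["name"]}: {s["reading"]} {s["unit"]} ({s["status"]})')
```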

InfiniBand / RoCE / RDMA

ibstat – Displays InfiniBand HCA (Host Channel Adapter) link status. Use it when checking whether IB interfaces are up, active, and negotiating the expected speed.
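On a server with eight or more HCAs, eyeballing ibstat output gets tedious, so here is a small Python sketch that parses it and lists any port that is not Active, not LinkUp, or not at the rate you expect. The function names and sample text are mine. The sample follows the usual ibstat layout but is illustrative, not captured from a real system.

```python
import re

# Illustrative sample only (hypothetical values)
SAMPLE = """\
CA 'mlx5_0'
    CA type: MT4129
    Number of ports: 1
    Port 1:
        State: Active
        Physical state: LinkUp
        Rate: 400
        Link layer: InfiniBand
CA 'mlx5_1'
    CA type: MT4129
    Number of ports: 1
    Port 1:
        State: Down
        Physical state: Polling
        Rate: 10
        Link layer: InfiniBand
"""

WANTED_KEYS = ("State", "Physical state", "Rate", "Link layer")


def parse_ibstat(text):
    """Return one dict per port with its CA name and key link fields."""
    ports, ca, port = [], None, None
    for raw in text.splitlines():
        line = raw.strip()
        m = re.match(r"CA '(.+)'", line)
        if m:
            ca, port = m.group(1), None  # new adapter, no port seen yet
            continue
        m = re.match(r"Port (\d+):", line)
        if m:
            port = {"ca": ca, "port": int(m.group(1))}
            ports.append(port)
            continue
        if port is not None and ":" in line:
            key, _, value = line.partition(":")
            if key in WANTED_KEYS:
                port[key] = value.strip()
    return ports


def ports_not_ready(ports, expected_rate):
    """Ports that are not Active/LinkUp or not running at expected_rate."""
    return [p for p in ports
            if p.get("State") != "Active"
            or p.get("Physical state") != "LinkUp"
            or p.get("Rate") != str(expected_rate)]


for p in ports_not_ready(parse_ibstat(SAMPLE), expected_rate=400):
    print(p["ca"], "port", p["port"], p.get("State"), p.get("Rate"))
```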

ibv_devinfo – Shows details about RDMA devices (HCAs), including capabilities, supported link types, and active ports. Use it to verify RDMA stack readiness.

rdma link / rdma dev (rdma-core) – Shows RDMA interface state, GIDs, RoCE modes. Helpful when debugging RoCEv2 mis‑configs or link negotiation.

ibping – Tests basic InfiniBand connectivity and fabric reachability.

perfquery / ibqueryerrors – Reads IB port counters to diagnose congestion, packet loss, or fabric errors.

Ethernet / System-level Commands

ip link / ip addr – Shows interface states and addresses. Use it to confirm NICs are up and configured correctly.
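The ip command can also output JSON (`ip -json link show`), which is much nicer to script against than the text format. A minimal Python sketch, with a function name and sample of my own, that lists interfaces whose operstate is not UP:

```python
import json

# Illustrative sample only, trimmed to the two fields used below
SAMPLE = """[
  {"ifname": "lo",   "operstate": "UNKNOWN"},
  {"ifname": "eth0", "operstate": "UP"},
  {"ifname": "eth1", "operstate": "DOWN"}
]"""


def down_interfaces(ip_json, ignore=("lo",)):
    """Names of interfaces whose operstate is anything other than UP."""
    links = json.loads(ip_json)
    return [link["ifname"] for link in links
            if link.get("ifname") not in ignore
            and link.get("operstate") != "UP"]


print(down_interfaces(SAMPLE))
```

Loopback is ignored by default because it normally reports UNKNOWN rather than UP.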

ss -tuna – Displays sockets and connections. Helpful for verifying GPU jobs aren’t stuck waiting on network flows.

dmesg – Shows kernel logs, often revealing driver issues, PCIe link retraining errors, or GPU resets.
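One thing worth searching the kernel log for on a GPU server is NVIDIA Xid messages, which the driver logs when it hits a GPU error. The Python sketch below pulls them out of dmesg text. The function name, regular expression and sample line are mine, and the pattern is based on the usual 'NVRM: Xid (PCI:...): <number>' layout, so adjust it if your driver logs look different.

```python
import re

# Matches e.g. "NVRM: Xid (PCI:0000:3b:00): 79, ..."
XID_RE = re.compile(r"NVRM: Xid \((PCI:[0-9A-Fa-f:.]+)\): (\d+)")

# Illustrative sample only (hypothetical timestamps and PIDs)
SAMPLE = """\
[  100.000001] mlx5_core 0000:17:00.0: firmware version: 28.39.1002
[ 1234.567890] NVRM: Xid (PCI:0000:3b:00): 79, pid=1234, GPU has fallen off the bus.
[ 1300.000002] pcieport 0000:3a:00.0: AER: Corrected error received
"""


def find_xid_events(log_text):
    """Return the PCI device and Xid number for each Xid line found."""
    events = []
    for line in log_text.splitlines():
        match = XID_RE.search(line)
        if match:
            events.append({"device": match.group(1),
                           "xid": int(match.group(2)),
                           "line": line.strip()})
    return events


for event in find_xid_events(SAMPLE):
    print(event["device"], "Xid", event["xid"])
```

On a live system you would feed it the output of dmesg instead of the sample.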

Storage / RoCE Commands

nvme list – Checks attached NVMe drives, often important for local scratch performance on GPU servers.

weka status (if using WekaIO) – Displays cluster and client status when debugging storage throughput issues.

Think I’ve missed any important ones? Let me know in the comments.

Time to get blogging again!

Wow! Just noticed it’s been a while since my last post. Took a blogging break during Covid and then just never got back in the habit. Well, time me-thinks to get back on the horse and start blogging again now we are in a new year! So watch this space!

Sorry I’ve been away so long.

Posted in General

Cisco Champions Radio: Hyperflex Gets Edgy!

Join me Darren Williams, Daren Fulwell and Lauren Friedman as we discuss the latest innovations with Cisco HyperFlex 4.0

Recorded for Cisco Champions Radio at Cisco Live Europe 2019 Barcelona.

Click the image below for the podcast.

Cisco Intersight Setup and Configuration

I’m sure by now you have heard of Cisco Intersight. Intersight is Cisco’s SaaS offering for monitoring and managing all your Cisco UCS and HyperFlex platforms from a single cloud-based GUI. And the best part is that the base licence and functionality are completely free!

This video walks you through the simple steps for setting up your Cisco Intersight account and registering your devices!

Let me know in the comments if you are using Cisco Intersight and how you are finding it. I, for instance, now have complete visibility of all our Cisco UCS and HyperFlex systems from my mobile phone and it doesn’t cost a penny!