I noticed last week that Cisco have just released the eagerly awaited Cisco UCS 3.1 code “Granada” on CCO.

Whenever a major or minor code drop appears, the first thing I always do is read the Release Notes to see what new features the code supports and which bugs it addresses. Well, reading the 3.1 release notes was like reading a Christmas list. There are far too many new features and enhancements to delve into in this post, so I will just be calling out the ones that most caught my eye.

Cisco UCS Gen 3 Hardware

At the top of the release notes is the announcement that 3.1 code supports the soon to be released Cisco UCS Generation 3 Hardware.

With the new Gen 3 products the UCS infrastructure now supports full end to end native 40GbE.

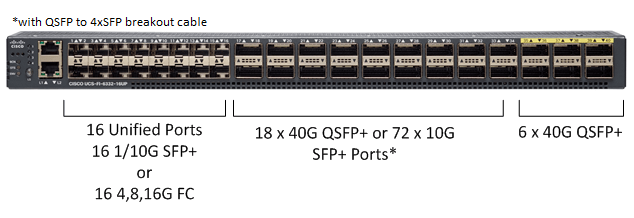

The Generation 3 Fabric Interconnects come in 2 models: the 6332, which is a 32-port 40G Ethernet/FCoE Interconnect, and the 6332-16UP, which as its model number would suggest comes with 16 Unified Ports that can run as 1/10G Ethernet/FCoE or as 4/8/16G native Fibre Channel.

The 6 x 40G Ports at the end of the Interconnects do not support breaking out to 4 x 10G ports, and are best used as 40G Network uplink ports.

The Gen 3 FIs are a variant of the Nexus 9332 platform, and although the Application Leaf Engine (ALE) ASIC, which would allow them to act as a leaf node in a Cisco ACI Fabric, is present, that functionality is not expected until the 4th Gen FI.

Cisco 6332 Fabric Interconnect

Cisco 6332-16UP Fabric Interconnect

6300 Fabric Interconnect Comparison

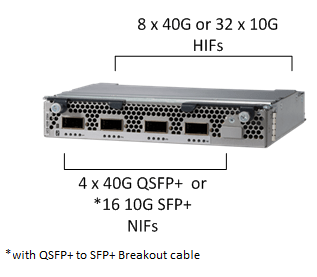

Now that there is a native 40G Fabric Interconnect we obviously need a native 40G Fabric Extender / IO Module, and the UCS-IOM-2304 is it. It has 4 x native 40G Network Interfaces (NIFs) and 8 x native 40G Host Interfaces (HIFs), one 40G HIF to each blade slot. These 40G HIFs are actually made up of 4 x 10G HIFs, but with the correct combination of hardware they are combined into a single native 40G HIF.

Now, as the purists will note, in network speak 1 x native 40G is not the same as 4 x 10G; it’s a speed vs. bandwidth comparison. The way I like to explain it is this: if you have a tube of factor 40 sun lotion and 4 tubes of factor 10, they are not the same thing. 4 x 10 in this case does not equal 40, you just have 4 times as much 10 🙂 It’s a similar story when comparing native 40G speed and bandwidth with 40G of bandwidth made up of 4 x 10G speed links. Hope that makes sense.
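To put rough numbers on the speed vs. bandwidth point, here is a minimal Python sketch. It assumes the usual port-channel behaviour where a single flow is hashed onto one member link and so can never run faster than that member. The function names and the 100 GB transfer size are made up purely for illustration.

```python
def aggregate_gbps(link_speed_gbps: float, links: int) -> float:
    """Total bandwidth across all member links."""
    return link_speed_gbps * links


def single_flow_gbps(link_speed_gbps: float, links: int) -> float:
    """Peak rate of ONE flow.

    Assumption: a flow is pinned to a single member link, so extra
    links add bandwidth for other flows but no speed for this one.
    """
    return link_speed_gbps


def transfer_seconds(size_gigabytes: float, rate_gbps: float) -> float:
    """Ideal time to move size_gigabytes at rate_gbps (no protocol overhead)."""
    return size_gigabytes * 8 / rate_gbps


# (link speed in Gbps, number of links)
options = {"1 x native 40G": (40, 1), "4 x 10G": (10, 4)}

for name, (speed, links) in options.items():
    total = aggregate_gbps(speed, links)
    flow = single_flow_gbps(speed, links)
    secs = transfer_seconds(100, flow)  # hypothetical 100 GB single-flow copy
    print(f"{name}: {total} Gbps total, {flow} Gbps per flow, {secs:.0f} s for 100 GB")
```

Both options show the same 40 Gbps of total bandwidth, but the single-flow copy takes four times as long over the 4 x 10G bundle.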

Cisco 2304 IO Module

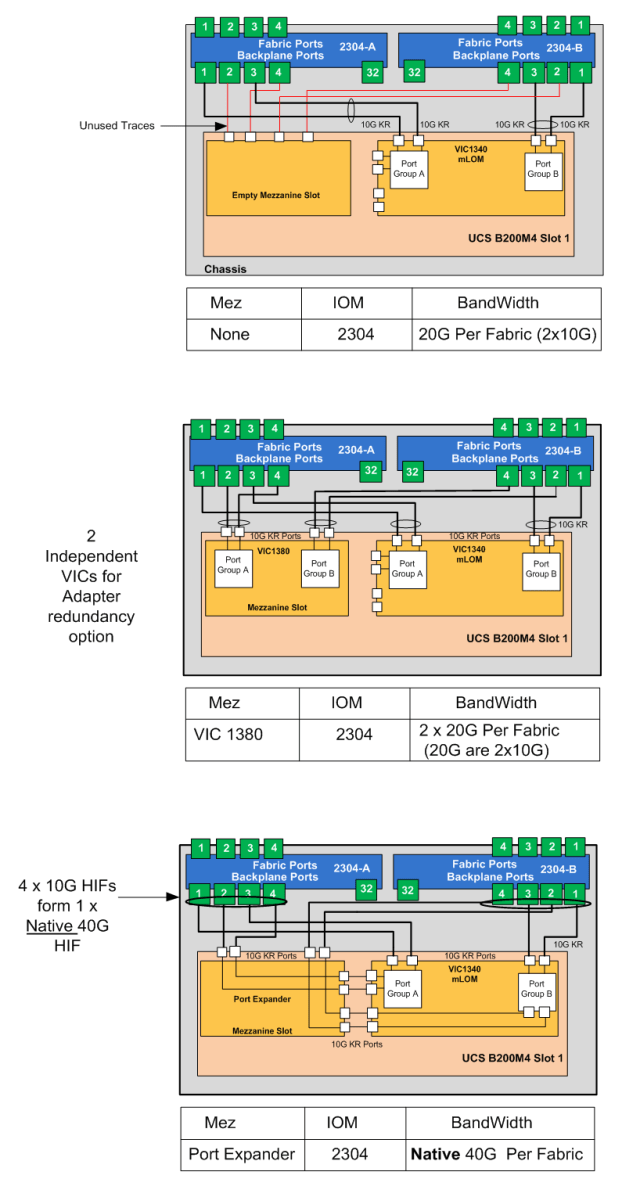

As mentioned above, the hardware combination you have determines the speed and bandwidth options available. The three diagrams below show the three supported options.

One Code to Rule Them All.

Next up is that UCSM 3.1 is a Unified code, which supports B-Series, C-Series and M-Series all within the same UCS Domain as well as support for UCS Mini and all this in a single code release.

HTML5 Interface.

I’m sure you have all shared my pain with Java in certain situations, so you will be glad to hear that UCSM 3.1 offers an HTML5 user interface on all supported Fabric Interconnects (6248UP, 6296UP, 6324, 6332 and 6332-16UP). The 6100 FI and UCS Gen 1 hardware are no longer supported as of UCSM 3.1(1e), so if you still have any, do not upgrade to 3.1: you will need to stay on UCSM 2.2 or earlier until you have removed all of your Gen 1 hardware! (Refer to the release notes for the full list of deprecated hardware.)
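As a quick pre-upgrade sanity check, something like the following Python sketch could flag Fabric Interconnects that would block a move to 3.1. The supported list is taken from the paragraph above; the function name and the example inventory are hypothetical, and the check only covers FI models, not IOMs, blades or adapters, so the release notes remain the authority.

```python
# FI models listed above as supported by UCSM 3.1
SUPPORTED_FIS_3_1 = {"6248UP", "6296UP", "6324", "6332", "6332-16UP"}


def blockers_for_3_1(fi_models):
    """Return the FI models in the inventory that are NOT on the 3.1 list."""
    return sorted({m for m in fi_models if m.upper() not in SUPPORTED_FIS_3_1})


# Hypothetical inventory: one Gen 2 FI and one 6100 series (Gen 1) FI
inventory = ["6248UP", "6120XP"]

blockers = blockers_for_3_1(inventory)
if blockers:
    print("Stay on UCSM 2.2 until these are removed:", ", ".join(blockers))
else:
    print("No unsupported FI models found")
```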

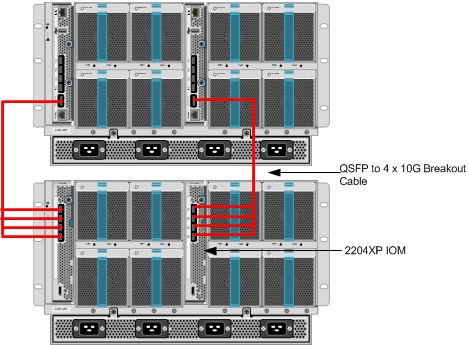

Support for UCS Mini Secondary Chassis.

I have deployed UCS mini in various situations, mainly DMZs, specialised pods like PCI-DSS workloads, or branch offices. While 8 blades and 7 rack mounts has always been enough, in many cases an “all blade solution” would have been preferred. Well, now UCS mini can scale to 16 blades. You can now daisy chain a second UCS Classic chassis from the 6324FI allowing for an additional 8 blades. You can either use the 10G ports for the secondary chassis or with the addition of the QSFP Scalability Port License you can break out the 40G scalability port to 4 x 10G, as shown below.

UCS Mini Secondary Chassis

Honourable Mentions.

- There is now an Nvidia M6 GPU for the B200M4 which would provide a nice graphic intensive VDI optimized blade solution.

- New “On Next Boot” maintenance policy that will apply any pending changes on an operating system initiated reboot.

- Firmware Auto Install will now check the status of all VIF paths before and after the upgrade and report on any difference.

- Locator LED support for server hard-disks.

Well, that about covers it for this update. I am looking forward to having a good play with this release and the new hardware when it comes out.

Until next time

Colin

Great post Colin.

Congrats.

Bruno Bortolato

One of my fave new features: VIF status no longer reported as down when service profile is associated but OS is shutdown or server is powered off.

Nice post Colin !

I’m confused by the math.

6332: 26 x 40G or 98 x 10G? I would have thought 26 x 4 = 104.

In the case of the 6332-16UP, 18 x 40G or 72 x 10G is OK: 18 x 4 = 72.

Thanks for any clarification.

Hi Walter

Hope you are well, great to hear from you!

Wow you have a great eye for detail 🙂

The reason is that ports 13 & 14 do not support breakout to 4 x 10G. If you want to run ports 13 and 14 as 10G you need to use a QSA module, which only gives you 1:1.

So the math is actually (24 x 4) + 2 = 96 + 2 = 98.
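For anyone who wants to check the port arithmetic from this thread, here is a small Python sketch. The helper name is made up; the port counts are the ones quoted in the comments above.

```python
def max_10g_ports(breakout_ports: int, qsa_ports: int = 0) -> int:
    """Each breakout-capable 40G port yields 4 x 10G; a QSA port yields 1 x 10G."""
    return breakout_ports * 4 + qsa_ports


# 6332: 26 x 40G ports, of which ports 13 & 14 are QSA only (1:1)
print(max_10g_ports(breakout_ports=24, qsa_ports=2))  # 98

# 6332-16UP: 18 x 40G ports, all counted as 4 x 10G breakout
print(max_10g_ports(breakout_ports=18))  # 72
```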

Regards

Colin

Perfect, Thanks Colin.

I’ve seen the comment in the Cisco presentation:

* QSA module required on ports 13-14 to provide 10G support

** Requires QSFP to 4xSFP breakout cable

Reblogged this on @greatwhitetec and commented:

Worth a read…

Very helpful update. Thanks!

Colin,

Do you know if the 2304’s are compatible with existing 5108 Chassis? Is there a particular generation of Chassis we would need for these? Thanks!


Hi Allen

No, both the original 5108 (Santa Clara) and the newer AC2 (San Martin) chassis are compatible with the 2304. Certain features of the 3.1 code, like peer IO module reset, do rely on the internal traces within the newer San Martin chassis.

Regards

Colin

Great blog! A few things I’ve noticed about the 6332 / 6332-16UP:

* the 6332 is expensive and the 2304 is really expensive. They only begin to make financial sense with 7+ chassis or for 80+ Gbps from each chassis to each fabric.

* despite the high FI cost, port licensing is a bit cheaper, especially for direct-attach C-Series and breakout ports.

* any config changes to port breakout, system QoS, or unified port mode require a reboot.

* the release notes call out a limit in the number of breakout ports when jumbo frames are used. There’s a discussion and screenshots about this at communities.cisco.com.

* You point out that Cisco has already publicly announced a “4th Gen FI” expected later this year. WTF?! That doesn’t bode well for the third gen FI!

What about these slides… (btw.: published in Feb 2015)… focusing on slide 40, it states that in a next step ACI-Integration will be realized with the “3rd Gen FI” with 6300. Do you have further information that this “Phase 3” was moved to the “4th Gen FI”?

Click to access UCS_and_ACI_Integration.pdf

I recall those slides and the confidence they imbued. Unfortunately, the news of a 4th gen FI was leaked during 6332 launch training last month. It was a surprise to many!

My guess is that the first-gen, Broadcom-powered Nexus 9300 (which the 6332 is based on) was not going to be able to fully support UCS and ACI together. Perhaps this was due to the limited buffers, VXLAN/FEX incompatibilities, end-host-mode/EPG integration, or perhaps something else entirely.

Meanwhile, Cisco is just now releasing the second generation Nexus 9300EX switches, powered by a Cisco ASIC engineered to handle all the things ACI, UCS, and NX-OS. Whatever showstoppers Cisco ran into with the 9300 are presumably fixed with the 9300EX.

This is public information about the forthcoming FI, which is ACI leaf capable.

https://communities.cisco.com/docs/DOC-65743

ACI and Nexus 9000 Announcement Preview

Recording from February 4th, 2016 where Joe Onisick, Principal Engineer – Technical Marketing for the Cisco Insieme Business Unit previews upcoming product announcements for the Nexus 9000 and ACI, and answers your questions.

The current 3rd gen FI (6332) is not, and never will be, ACI leaf capable.

The new ACI leaf capable FI (4th gen, or 3.5 gen) will be announced in the 2nd half of CY 2016 (July / August 2016). This box will be lower cost than the 6332.

Customers who are interested in integrating UCS into ACI should wait for this FI; it will not only shorten the network by one hop, but also allow APIC to provision UCS servers. If you are not interested in ACI, go with the 6332.


Hi Walter

According to the Cisco UCS Manager Infrastructure Management Guide, Release 3.1, if you want to daisy chain a second UCS Classic chassis from the 6324FI for an additional 8 blades, only the scalability port is supported for connecting it to the existing primary UCS chassis.

I saw in your post that

“You can either use the 10G ports for the secondary chassis or with the addition of the QSFP Scalability Port”, which I think is wrong.

When I tried to add a secondary chassis through a 10Gb port, I could not find the secondary chassis.

Actually, after I moved the connection from the 10Gb port to the scalability port, the problem was solved.

Please check this document:

http://www.cisco.com/c/en/us/td/docs/unified_computing/ucs/ucs-manager/GUI-User-Guides/Infrastructure-Mgmt/3-1/b_UCSM_GUI_Infrastructure_Management_Guide_3_1/b_UCSM_GUI_Infrastructure_Management_Guide_3_1_chapter_010.html

“Cisco UCS Manager Release 3.1(1) introduces support for an extended UCS 5108 chassis to an existing single-chassis Cisco UCS 6324 fabric interconnect setup. This extended chassis enables you to configure an additional 8 servers. Unlike the primary chassis, the extended chassis supports IOMs. Currently, it supports UCS-IOM-2204XP and UCS-IOM-2208XP IOMs. The extended chassis can only be connected through the scalability port on the FI-IOM.”

Hi Colin, long time lurker, first time posting. Great Blog BTW!

I have a question regarding Cisco FlexFlash. From what I’ve read, if you don’t purchase SD cards with your server, the FlexFlash controller ISO will not be installed.

My example is a C240 M3 with a SAS 9271-8i, which is working and shows up under the Storage tab of UCSM, but there is no FlexFlash.

Any idea? Thanks

Hi Tyler

I have certainly added FlexFlash to servers in the field.

The issue you may be having is that on first use you need to configure a one-time-only scrub policy to format the SD cards and create the mirror policy (if you have 2 installed).

Details on this can be found here:

http://www.cisco.com/c/en/us/products/collateral/servers-unified-computing/ucs-c-series-rack-servers/whitepaper-c11-734876.html

Regards

Colin

Hi again Colin,

Can you please help me? I can’t seem to sort out an issue I’m having.

I am trying to associate a service profile to a B200-M3 blade with a VIC 1240 adapter.

It is failing at 25%:

Remote Invocation Result: End Point Unavailable

Remote Invocation Error Code: 869

Remote Invocation Description: Adapter:unconfigAllNic failed

It seems the DCE is not seeing the B-side of the chassis.

Please help!

Is it possible to use the iom 2304’s with the 6248’s?

Yes, with 3.2(3b). See the matrix below:

https://www.cisco.com/c/en/us/td/docs/unified_computing/ucs/release/notes/CiscoUCSManager-RN-3-2.html#reference_93EC529654A14701B98253AC3C22A28C