Following on from last weeks big announcements and the teaser on the new M4 line-up I am pleased to say I can now post the details of that new line-up.

The new M4 servers, are based on the Intel Grantley-EP Platform, incorporating the latest Intel E5-2600 v3 (Haswell EP) processors. These new processors are available with up to an incredible 18 Cores per socket and support memory speeds up to a blistering 2133MHz.

Which in real terms means far denser virtualised environments and higher performing bare metal environments, which equates to less compute nodes required for the same job, and all the other efficiencies having a reduced footprint brings.

The new models being announced today are:

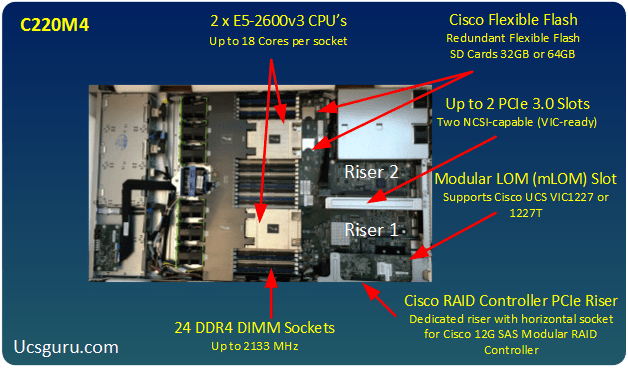

C220M4

The stand out details for me here, are that the two new members of the C-Series Rack Mount family now come with a Modular LAN on Motherboard (mLOM) the VIC 1227 (SFP) and the VIC 1227T (10GBaseT). Which means this frees up a valuable PCIe 3.0 slot.

The C220M4 has an additional 2 x PCIe 3.0 Slots which could be used for additional I/O like the VIC1285 40Gb adapter or the new Gen 3 40Gb VIC1385 adapter. The PCIe slots also support Graphic Processing Units (GPU) for graphics intensive VDI solutions as well as PCIe Flash based UCS Storage accelerators.

C240M4

In addition to all the goodness you get with the C220 the C240 offers 6 PCIe 3.0 Slots, 4 of which are full height, full length which should really open up the 3rd party card offerings.

Also worth noting that in addition to the standard Cisco Flexible Flash SD cards, the C240 M4 also has an optional 2 internal small form factor (SFF) SATA drives. Ideal if you want to keep a large foot printed operating system physically separate from your front facing drives.

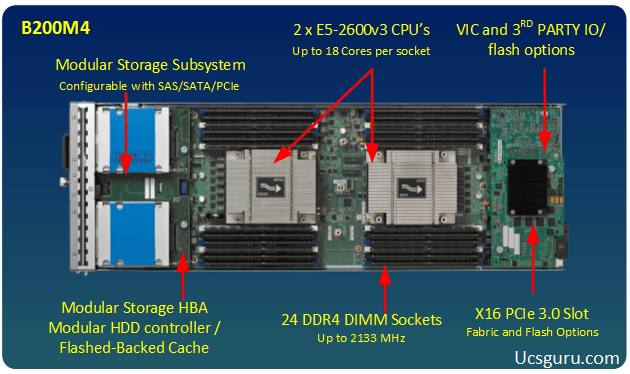

B200M4

And now for my favourite member of the Cisco UCS Server family, the trusty, versatile B200, great to see this blade get “pimped” with all the M4 goodness, as well as some great additional innovation.

So on top of the E5-2600v3 CPU’s supporting up to 18 Cores per socket, the ultra-fast 2133MHz DDR4 Memory, as well as the new 40Gb ready VIC 1340 mLOM, what I quite like about the B200M4 is the new “FlexStorage” Modular storage system.

Many of my clients love the statelessness aspects of Cisco UCS and to exploit this to the Max, most remote boot. And while none of them have ever said, “Colin it’s a bit of a waste, I’m having to pay for, and power an embedded RAID controller, when I’m not even using it”, well now they don’t have to, as the drives and storage controllers are now modular and can be ordered separately if required, or omitted completely if not.

But if after re-mortgaging your house you still can’t afford the very pricey DDR4 Memory, worry ye not, as the M3 DDR3 Blades and Rack mounts certainly aren’t going anywhere, anytime soon.

Until next time.

Take care of that Data Center of yours!

Colin

Dear UCSguru,

I am in a confusion regarding alert throttling feature of UCS.

I am having a power supply unit faulty in my device.

My confusion is that sometimes I get repeated mails of the PSU failure while for some hours the alerts doesn,t come at all.

Kindly explain the underlying principle of alert generation and throttling.

Because, as a textbook behavior the alerts should come at a fixed interval i.e. whenever the system runs the diagnostics it should send the alerts. but the timings of alert are irregular and not resembling any patterns.

Regards,

Shiv Shambhu

shivshambhuyadav@gmail.com

Hi Shiv

The Call Home throttling feature is designed to filter out multiple call home messages for the same event,in order to save your inbox getting flooded. Throttling is enabled by default.

With regards to call home message generation intervals, as far as I am ware the call home message is generated at the same time at the alert is generated (As long as the fault is call home enabled in the call home policy)

I would compare your call home messages to the UCS System Event Log (SEL) and confirm you are getting them at the same time al-be-it less instances due to the throttling.

Regards

Colin

Dear UCS Guru,

Kindly let me how this Modular Storage HBA is going to help the customers who are planning to buy UCS B200 M4.

Thanks.

Simple answer is cost and power.

Most clients boot from SAN so buying and powering an un-necessary component in every one of there Servers could be termed an inefficient waste.

Now they don’t have to.

Thanks for the question.

Colin

What percentage of your customers boot from SAN? Have they run into any SAN blips that cause the OS to crash?

Ben

Hi Ben

I would say about 90% of the UCS Designs and Implementations I do are boot from SAN.

In all that time I’ve never really seen any issues.

However last month I had a client that was comparing SAN boot to SD Card boot, and they turned off

Both Fabrics

at the same time. Akin to ungracefully just pulling out your hard drive from a running machine.

In this case 2 out of the 10 BFS Servers ended up with a corruption of the ESXi instance on the boot LUN, All 10 of the SD Card booted servers recovered OK.

My client raised this with me, to which my answer was , why would you ever turn off both fabrics for an HA test? just to one at a time and all will be fine.

Regards

Colin

Hi Colin,

Planning to have pairs of SD cards (FlexFlash RAID1) for the new M4s. Is that something you commonly see?

Thanks

Hi Jeff

Boot from SAN is still by far the most common, but I have recently done a couple of boot from mirrored FlexFlash card deployments.

One use case was VMware auto deploy and the other the client just prefered to boot from the SD cards.

Remember to set the Raid 1 FlexFlash Policy, and A FlexFlash Scrub on the first boot to establish the mirror.

Colin

Hi UCS Guru,

We have VMware farm in UCs environment and recently we have added one more domain.

We have ESXi host from both the UCS domain on same cluster and we are thinking how the VMotion traffic will flow from ESXi host from domain 1 to domain 2. Will that take much time or genrate traffic during the vmotion since it has follow the long chain of sending the packet from one UCS FI to another UCS FI and then go to VMotion VMkernel port.

Hence we are planning to have all ESXi host in a cluster from same UCS domain so that traffic should be with in the FI only.

Can you please help us to understand how the VMotion traffic flow from one UCS domain to another UCS domain in such scneraio and how much beneficial it would be if we keep host on same UCS domain for a cluster.

Environment :- UCS FI 6248 (Domain1); UCS FI 6296 (Domain 2) N5k and N7k as physical switch, Nk1v DVs in VMware Farm and both domain are added in Central.

Any detail explaination of the traffic and suggestion will be highly appreciable.

Hi

Ok the main thing to remember is a Cisco UCS Fabric Interconnect can only locally switch traffic at Layer 2 (within the same subnet) and only then if both the source and destination MAC addresses within the same fabric (A or B) VM’s residing in the same host could be locally switched within the Nexus 1000v.

So an inter UCS Domain vMotion would exit UCS Domain 1, be sent up the relevant uplink that the source machines vMotion vNIC is pinned to. the traffic would then hit your N5K layer and then the N5K could locally switch the traffic down to UCS Domain 2 (assuming Domain 1 and Domain 2 are connected to the same 5K)

Of course having all your hosts residing within the same UCS Domain, and restricting vMotion to use a single fabric at any one time would optimise the vMotion traffic, which as you say could then just be hairpinned round within the fabric interconnect.

But at the end of the day it is common place to vMotion across networks these days, I would ask if you are expeiencing any issues either latentcy or bandwidth related with your current setup? if so this could perhaps be better addressed by correctly priorising the vMotion traffic with QoS polices.

Regards

Colin

Hi,

In a boot from SAN scenario where you have B200M3 booting ESXi 5.1u2 with VIC1240(EXP), could you add B200M4 with VIC1240(EXP) and swap the UCS service profile between the two? Would different CPU versions or RAM (DDR3 vs DDR4) be an issue or would it be seamless?

Hi Cam

The Boot policy is certainly blade model independent, as long as you have called your vHBA’s the same thing in the service profile e.g fc0 and fc1.

With regards to the whole Service Profile being able to switch between blade models, there would be no issue as long as you are not referencing a Bios Policy feature which is available on one but not the other.

This ability to move SP’s to different blade models, is great for temporarily Flexing (up or down) the environment or conducting upgrades/downgrades of the blades on which the workloads are associated.

The only time I have had “Issues” is when moving Service Profiles between half width and full width blades containing 2 Mezzanine cards. In this case you just need to configure PCI placement policies to ensure that all your vNICs appear in the same order regardless of the blade.

Regards

Colin

Regards

Colin